
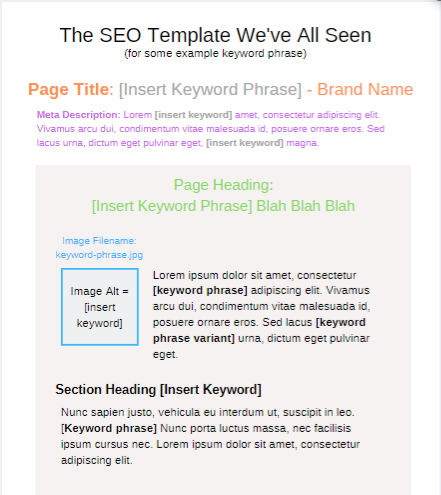

If content is queen, and SEO plays the critical role of bridging the two to drive growth, then there’s no question that keyword research is important. However, connecting the dots to create content that ranks well can be difficult. What makes it so difficult? How do you go from a target keyword phrase to an article that is unique and comprehensive, covers all the major on-page SEO elements, touches the reader, and isn’t structured like the “oh-so-familiar” generic SEO template?

There’s no one-size-fits-all approach! However, there is a simple way to help any member of your editorial, creative writing, or content team pin down what they need in order to write SEO-friendly content, and that’s an SEO content brief. Key benefits of a content brief:

So the rest of this article will cover how we actually get there & we’ll use this very article as an example:

Any good editor will tell you great content comes from having a solid content calendar with topics planned in advance for review and release at a regular cadence. To support topical analysis and themes, as SEOs we need to start with keyword research.

Start with keyword research: Topic, audience, and objectives

The purpose of this guide isn’t to teach you how to do keyword research. It’s to set you up for success in taking the step beyond that and developing it into a content brief. Your primary keywords serve as your topic themes, but they are also the beginnings of your content brief, so try to ensure you:

How does all this help in supporting a content brief? You and your team can get answers to the key questions mentioned below.

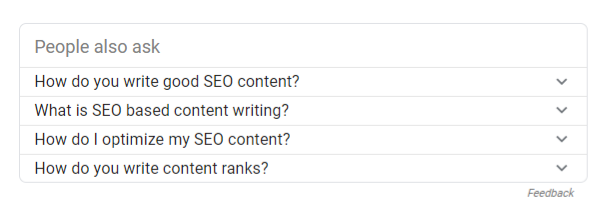

Now with keywords as our guide to overall topical themes, we can focus on the next step, topical expansion.

Topical expansion: Define key points and gather questions

Writers need more than keywords. They require insight into the pain points of the reader, the key areas of the topic to address and, most of all, the questions the content should answer. This too will go into your content brief. We’re in luck as SEOs because there is no shortage of tools that allow us to gather this information around a topic. For example, let’s say this article focuses on “SEO writing”. There are a number of ways to expand on this topic.

You’ve taken note of what to write about, and how to cover the topic fully. But how do we begin to determine what type of content to create and how in-depth it should be?

Content and SERP analysis: Specifying content type and format

Okay, so we’re almost done. We can’t tell writers to write unique content if we can’t specify what makes it unique. Reviewing the competition and what’s being displayed consistently in the SERP is a quick way to assess what’s likely to work. You’ll want to look at the top ten results for your primary topic and collect the following:

Content brief development: Let’s make beautiful content together

Now you’re ready to prepare your SEO content brief, which should include the following:

[Note: If/when using internally, consider making the brief part of the content request process, or a template for the editorial staff. When using externally, be sure to include where the content will be displayed, the format/output, and any specialty editorial guidance.]

Template and tools

Want to take a shortcut? Feel free to download and copy my SEO content brief template; it’s a Google doc. Other content brief templates/resources:

If you want to streamline the process as a whole, MarketMuse provides a platform that manages the keyword research and topic expansion, provides the questions, and manages the entire workflow. It even allows you to request a brief, all in one place. I only suggest this for larger organizations looking to scale as there is an investment involved. You’d likely also have to do some work to integrate it into your existing processes.

Jori Ford is Sr. Director of Content & SEO at G2Crowd. She can also be found on Twitter @chicagoseopro.

The post SEO writing guide: From keyword to content brief appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/04/16/seo-writing-guide-from-keyword-to-content-brief/


YouTube is not just a social media platform. It’s a powerful search engine for video content. Here’s how to make the most of its SEO potential. There are more than 1.9 billion users who use YouTube every month. People are spending over a billion hours watching videos every day on YouTube. This means that there is a big opportunity for brands, publishers and video creators to expand their reach. Search optimization is not just for your site’s content. YouTube can have its own best practices around SEO and it’s good to keep up with the most important ones that can improve your ranking. How can you improve your SEO on YouTube? We’ve organized our advanced YouTube SEO tactics into three key areas:

Advanced YouTube SEO tips to drive more traffic and improved rankings

Keyword research

It’s not enough to create the right content if you don’t get new viewers to actually watch it. Keywords can help you understand how to link your video with the best words to describe it. They make it easier for viewers to discover your content, and they also help search engines match the content with search queries and judge its relevance. Video keyword research can help you discover new content opportunities while also improving your SEO. A quick way to find popular keywords for the content you have in mind is to start searching in YouTube’s search bar. The auto-complete feature will highlight the most popular keywords around your topic. You can also perform a similar search in Google to come up with more suggestions for the best keywords.

If you’re serious about keyword research and need to find new ideas, you can use additional online tools that will provide you with a list of keywords to consider. When it comes to picking the best keywords, you don’t need to aim for the most obvious choice. You can start with the keywords that are low in competition and aim to rank for them. Moreover, it’s good to keep in mind that YouTube is transcribing all your videos. If you want to establish your focus keywords, you can include them in your actual video by mentioning them as you talk. This way you’re helping YouTube understand the contextual relevance of your content along with your keywords.

Recap

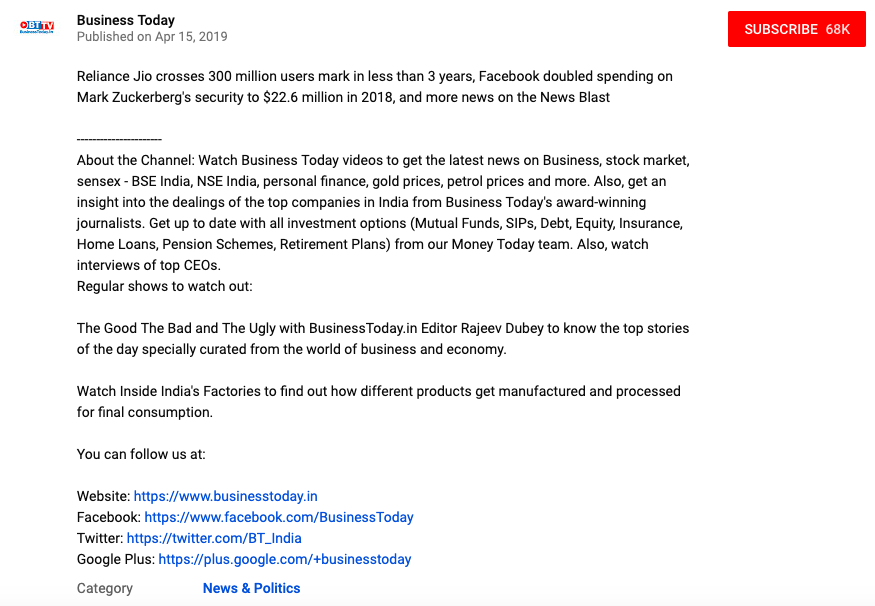

Content optimization

There are many ways to improve the optimization of your content and here are some key tips to keep in mind:

1. Description

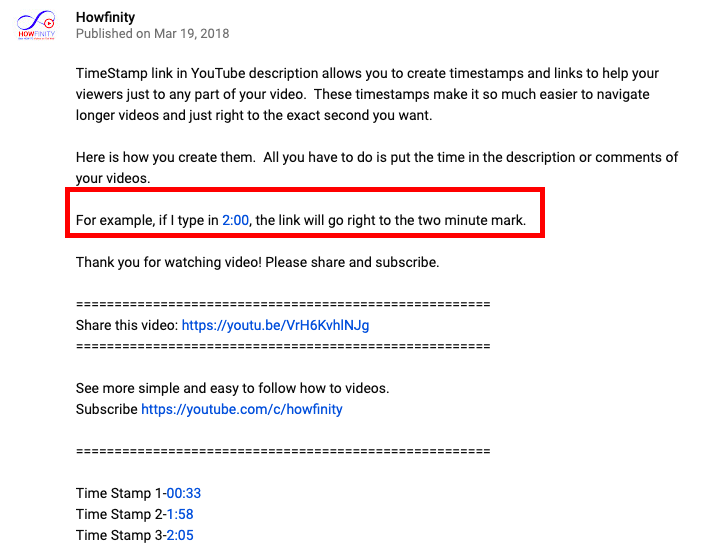

Your description should facilitate the search for relevant content. A long description helps you provide additional context to your video. It can also serve as an introduction to what you’re going to talk about. As with blog posts, a longer description can grant you the space to expand your thoughts. Start treating your videos as the starting point and add further details about them in the description. If your viewers are genuinely interested in your videos then they will actually search for additional details in your description.

2. Timestamp

More videos are adding timestamps in their description. This is a great way to improve user experience and engagement. You are helping your viewers to find exactly what they are looking for, which increases the chances of keeping them coming back.

3. Title and keywords

Keywords are now clickable in titles. This means that you are increasing the chances of boosting your SEO by being more creative with your titles. Be careful not to create content just for search engines, though. Always start by creating content that your viewers would enjoy.

4. Location

If you want to tap into local SEO then it’s a good idea to include your location in your video’s copy. If you want to create videos that are targeting local viewers then it’s a great starting point for your SEO strategy.

5. Video transcripts

Video transcripts make your videos more accessible. They also make it easier for search engines to understand what the video is about. Think of the transcript as the process that makes the crawling of your content easier. There are many online options to create your video transcripts so it shouldn’t be a complicated process to add them to your videos.

Engagement

Engagement keeps gaining ground when it comes to YouTube SEO. It’s not enough to count the number of views if your viewers are not engaging with your content. User behavior helps search engines understand whether your content is useful or interesting for your viewers so they can rank it accordingly. Thus, it’s important to pay attention to these metrics:

Learning from the best

A good tip to understand YouTube SEO is to learn from the best by looking at the current most popular videos. You can also search for topics that are relevant to your channel to spot how your competitors are optimizing their titles, their keywords, and how thumbnails and descriptions can make it easier to click on one video over another.

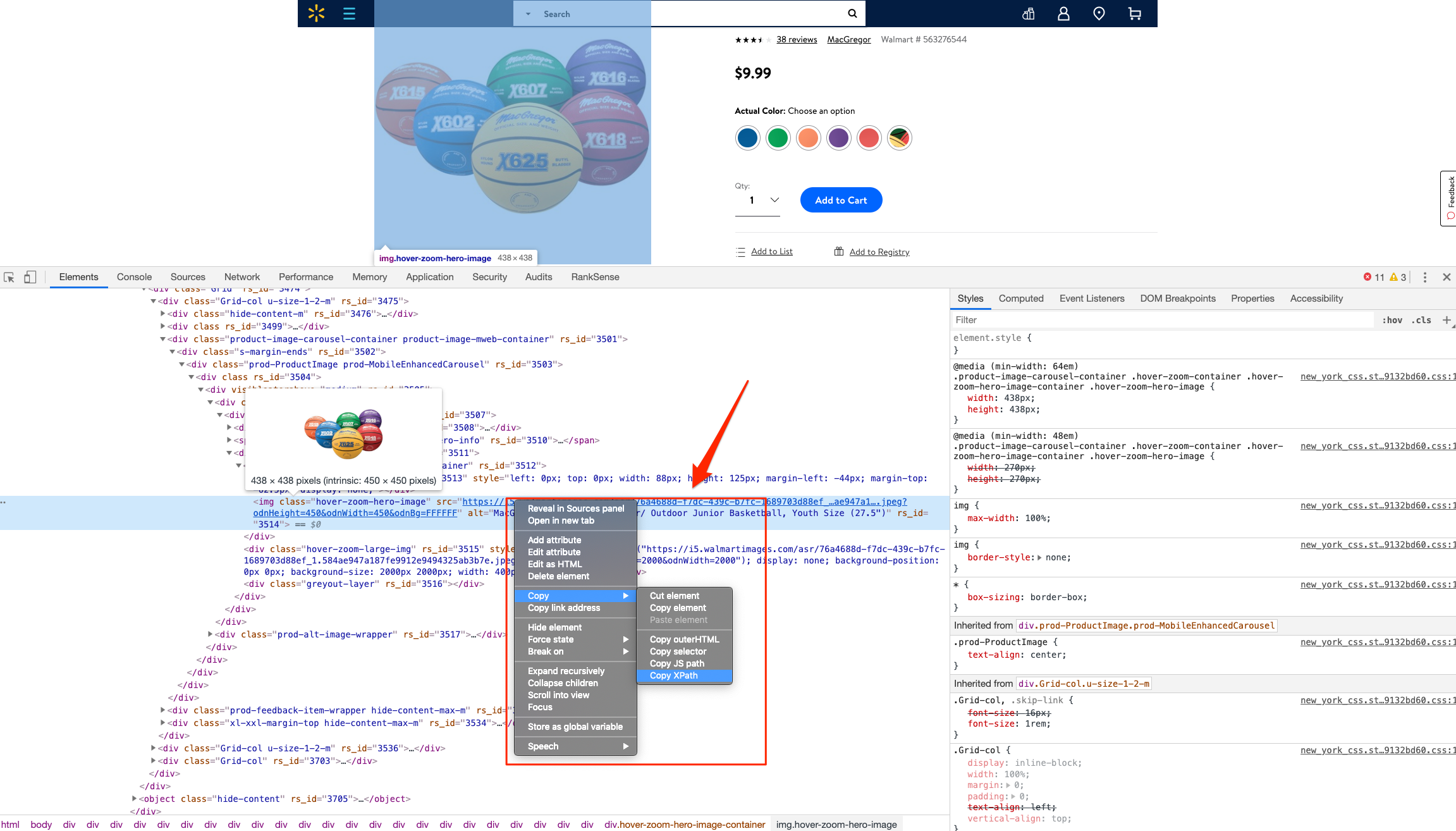

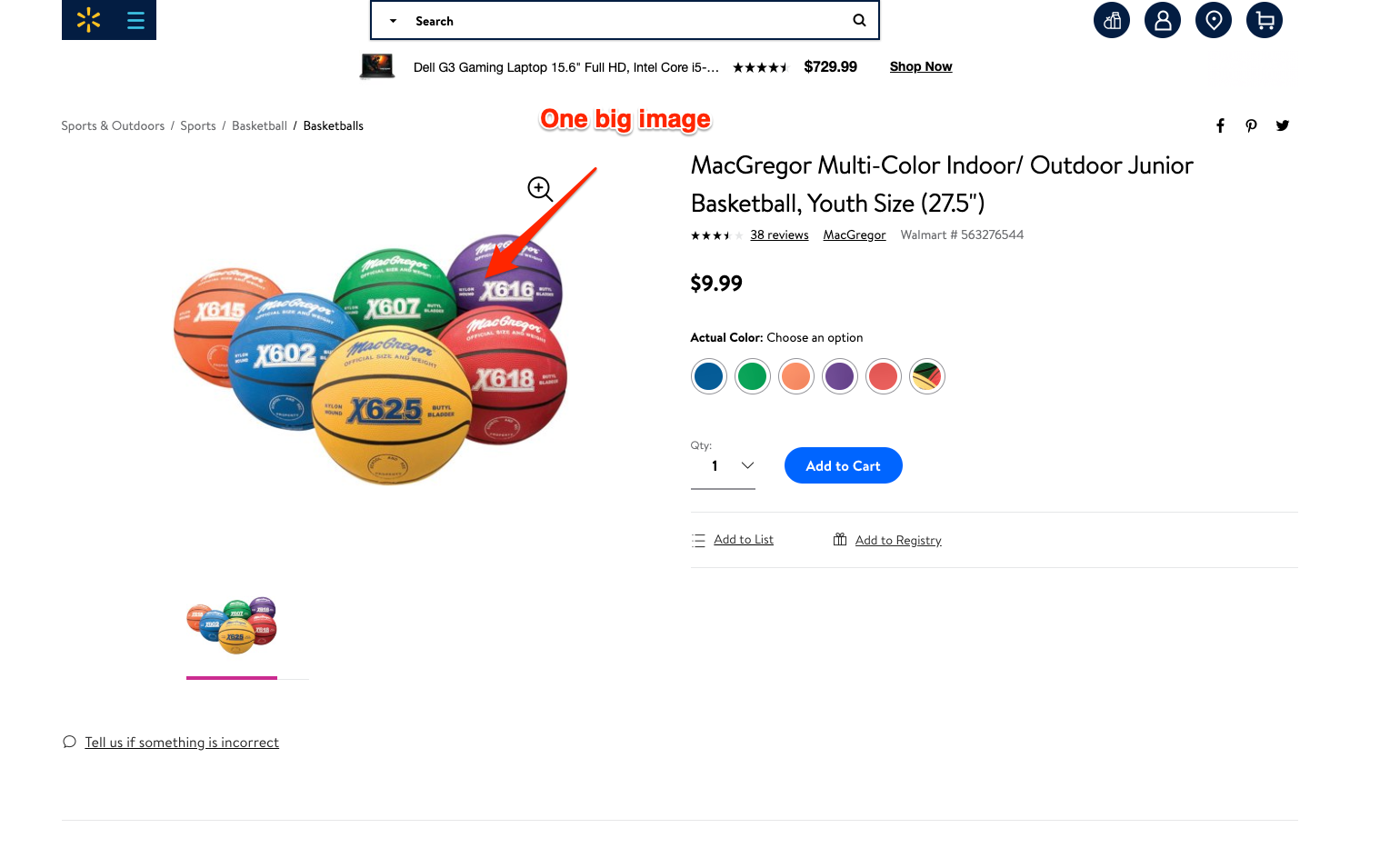

Have any queries or tips to add to these? Share them in the comments.

The post Top advanced YouTube SEO tips to boost your video performance appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/04/22/top-advanced-youtube-seo-tips-to-boost-your-video-performance/

When you incorporate machine learning techniques to speed up SEO recovery, the results can be amazing. This is the third and last installment in our series on using Python to speed SEO traffic recovery. In part one, I explained how our unique approach, which we call “winners vs losers”, helps us quickly narrow down the pages losing traffic to find the main reason for the drop. In part two, we improved on our initial approach by manually grouping pages using regular expressions, which is very useful when you have sites with thousands or millions of pages, as is typically the case with ecommerce sites. In part three, we will learn something really exciting: how to automatically group pages using machine learning. As mentioned before, you can find the code used in parts one, two and three in this Google Colab notebook. Let’s get started.

URL matching vs content matching

When we grouped pages manually in part two, we benefited from the fact that the URL groups had clear patterns (collections, products, and the others), but it is often the case that there are no patterns in the URL. For example, Yahoo Stores’ sites use a flat URL structure with no directory paths. Our manual approach wouldn’t work in this case. Fortunately, it is possible to group pages by their contents because most page templates have different content structures. They serve different user needs, so that needs to be the case. How can we organize pages by their content? We can use DOM element selectors for this. We will specifically use XPaths.
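To make the idea of an element selector concrete, here is a minimal sketch using the limited XPath support in Python's standard library. The markup, the `product-hero` class name, and the `page_group` helper are all hypothetical, and the snippet assumes well-formed markup; real pages are rarely valid XML and would likely need an HTML-tolerant parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed markup standing in for two page templates.
PRODUCT_PAGE = (
    "<html><body><div id='main'>"
    "<img class='product-hero' src='a.jpg'/>"
    "</div></body></html>"
)
CATEGORY_PAGE = (
    "<html><body><ul>"
    "<li><img class='thumb' src='b.jpg'/></li>"
    "</ul></body></html>"
)

# Hypothetical selector: an element that only the product template contains.
PRODUCT_XPATH = ".//div[@id='main']/img[@class='product-hero']"


def page_group(markup):
    """Label a page 'product' if the selector matches, otherwise 'other'."""
    root = ET.fromstring(markup)
    return "product" if root.find(PRODUCT_XPATH) is not None else "other"


print(page_group(PRODUCT_PAGE))
print(page_group(CATEGORY_PAGE))
```

Each additional page group would need its own hand-picked selector, which is why this style of grouping remains a manual process.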

For example, I can use the presence of a big product image to know the page is a product detail page. I can grab the product image address in the document (its XPath) by right-clicking on it in Chrome and choosing “Inspect,” then right-clicking to copy the XPath. We can identify other page groups by finding page elements that are unique to them. However, note that while this would allow us to group Yahoo Store-type sites, it would still be a manual process to create the groups.

A scientist’s bottom-up approach

In order to group pages automatically, we need to use a statistical approach. In other words, we need to find patterns in the data that we can use to cluster similar pages together because they share similar statistics. This is a perfect problem for machine learning algorithms. BloomReach, a digital experience platform vendor, shared their machine learning solution to this problem. To summarize it, they first manually selected cleaned features from the HTML tags like class IDs, CSS style sheet names, and others. Then, they automatically grouped pages based on the presence and variability of these features. In their tests, they achieved around 90% accuracy, which is pretty good. When you give problems like this to scientists and engineers with no domain expertise, they will generally come up with complicated, bottom-up solutions. The scientist will say, “Here is the data I have, let me try different computer science ideas I know until I find a good solution.” One of the reasons I advocate practitioners learn programming is that you can start solving problems using your domain expertise and find shortcuts like the one I will share next.

Hamlet’s observation and a simpler solution

For most ecommerce sites, most page templates include images (and input elements), and those generally change in quantity and size.
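As a rough illustration of that observation, the sketch below counts image, input, and form elements in a static HTML string using only the standard library. The `ElementCounter` class, the `count_elements` helper, and the sample markup are hypothetical; it does not measure image sizes, and it is not the crawler used for the article, which relies on browser automation.

```python
from html.parser import HTMLParser


class ElementCounter(HTMLParser):
    """Counts <img>, <input>, and <form> start tags in an HTML document."""

    def __init__(self):
        super().__init__()
        self.counts = {"img": 0, "input": 0, "form": 0}

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names before calling this method.
        if tag in self.counts:
            self.counts[tag] += 1


def count_elements(html):
    """Return a dict of element counts for one page's HTML."""
    parser = ElementCounter()
    parser.feed(html)
    parser.close()
    return dict(parser.counts)


# Hypothetical product-page markup, for illustration only.
sample_product_page = """
<html><body>
  <img src="/images/hero-large.jpg">
  <form action="/cart">
    <input name="quantity"><input name="color">
  </form>
</body></html>
"""

element_counts = count_elements(sample_product_page)
print(element_counts)
```

Counts like these, computed per URL, are the kind of raw signal that could then be turned into features for a model.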

I decided to test the quantity and size of images, and the number of input elements, as my feature set. We were able to achieve 97.5% accuracy in our tests. This is a much simpler and more effective approach for this specific problem. All of this is possible because I didn’t start with the data I could access, but with a simpler domain-level observation. I am not trying to say my approach is superior, as they have tested theirs on millions of pages and I’ve only tested this on a few thousand. My point is that as a practitioner you should learn this stuff so you can contribute your own expertise and creativity. Now let’s get to the fun part and write some machine learning code in Python!

Collecting training data

We need training data to build a model. This training data needs to come pre-labeled with “correct” answers so that the model can learn from the correct answers and make its own predictions on unseen data. In our case, as discussed above, we’ll use our intuition that most product pages have one or more large images on the page, and most category type pages have many smaller images on the page. What’s more, product pages typically have more form elements than category pages (for filling in quantity, color, and more). Unfortunately, crawling a web page for this data requires knowledge of web browser automation and image manipulation, which are outside the scope of this post. Feel free to study this GitHub gist we put together to learn more. Here we load the raw data already collected.

Feature engineering

Each row of the form_counts data frame above corresponds to a single URL and provides a count of both the form elements and the input elements contained on that page. Meanwhile, in the img_counts data frame, each row corresponds to a single image from a particular page. Each image has an associated file size, height, and width. Pages are more than likely to have multiple images, and so there are many rows corresponding to each URL. It is often the case that HTML documents don’t include explicit image dimensions. We are using a little trick to compensate for this. We are capturing the size of the image files, which would be proportional to the product of the width and the height of the images. We want our image counts and image file sizes to be treated as categorical features, not numerical ones. When a numerical feature, say new visitors, increases it generally implies improvement, but we don’t want bigger images to imply improvement. A common technique to do this is called one-hot encoding. Most site pages can have an arbitrary number of images. We are going to further process our dataset by bucketing images into 50 groups. This technique is called “binning”. Here is what our processed data set looks like.
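Binning followed by one-hot encoding can be sketched in plain Python as below. This is only an illustration under stated assumptions: the equal-width buckets, the `bin_index` and `one_hot` helpers, and the 500,000-byte ceiling are hypothetical choices, and the notebook may bucket the data differently.

```python
def bin_index(value, max_value, n_bins=50):
    """Map a non-negative value to one of n_bins equal-width buckets."""
    if max_value <= 0:
        return 0
    clipped = min(max(value, 0), max_value)
    # A value equal to max_value falls into the last bucket, not a 51st one.
    return min(int(clipped * n_bins / max_value), n_bins - 1)


def one_hot(index, n_bins=50):
    """Return a list of n_bins zeros with a single 1 at position index."""
    vector = [0] * n_bins
    vector[index] = 1
    return vector


# Hypothetical image file sizes in bytes and an assumed ceiling.
MAX_FILE_SIZE = 500_000
file_sizes = [0, 120_000, 500_000]

buckets = [bin_index(size, MAX_FILE_SIZE) for size in file_sizes]
encoded = [one_hot(bucket) for bucket in buckets]
print(buckets)
```

Because each bucket becomes its own 0/1 column, the model sees "which size range" rather than "how big", so a larger file is not treated as inherently better.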

Adding ground truth labels

As we already have correct labels from our manual regex approach, we can use them to create the correct labels to feed the model. We also need to split our dataset randomly into a training set and a test set. This allows us to train the machine learning model on one set of data, and test it on another set that it’s never seen before. We do this to prevent our model from simply “memorizing” the training data and doing terribly on new, unseen data. You can check it out at the link given below:

Model training and grid search

Finally, the good stuff! All the steps above, the data collection and preparation, are generally the hardest part to code. The machine learning code is generally quite simple. We’re using the well-known Scikit-learn Python library to train a number of popular models using a bunch of standard hyperparameters (settings for fine-tuning a model). Scikit-learn will run through all of them to find the best one. We simply need to feed in the X variables (our feature engineering parameters above) and the Y variables (the correct labels) to each model, and perform the .fit() function and voila!

Evaluating performance
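Model accuracy is measured on the held-out test set described above. As a minimal, stdlib-only sketch of that idea (not the notebook's code, which uses Scikit-learn), the snippet below shuffles labeled rows with a fixed seed, holds out a fraction for testing, and scores predictions by accuracy. The helper names, the 80/20 split, and the sample rows are assumptions made for illustration.

```python
import random


def split_train_test(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of rows and split it into (train, test) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def accuracy(true_labels, predicted_labels):
    """Fraction of predictions that match the true labels."""
    if not true_labels:
        raise ValueError("no labels to score")
    matches = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return matches / len(true_labels)


# Hypothetical labeled rows of the form (url, page_group).
labeled_pages = [
    ("/page-%d" % i, "product" if i % 2 else "category") for i in range(10)
]

train_rows, test_rows = split_train_test(labeled_pages)
print(len(train_rows), len(test_rows))
```

A model would be fitted on `train_rows` only, and `accuracy` would then compare its predictions for `test_rows` against the known labels.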

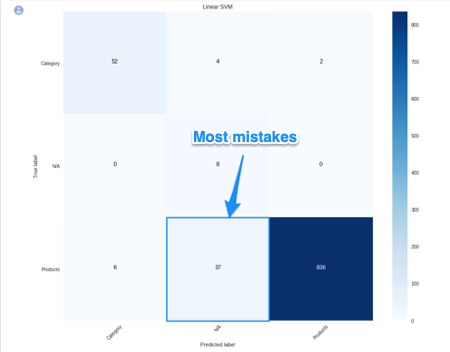

After running the grid search, we find our winning model to be the Linear SVM (0.974), with logistic regression (0.968) coming in a close second. Even with such high accuracy, a machine learning model will make mistakes. If it doesn’t make any mistakes, then there is definitely something wrong with the code. In order to understand where the model performs best and worst, we will use another useful machine learning tool, the confusion matrix.
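For readers unfamiliar with the idea, a confusion matrix simply counts how often each true class was predicted as each class. The sketch below builds one in plain Python. The labels are made up for illustration and are not the article's results, and the `confusion_matrix` helper here is a hypothetical stand-in rather than the notebook's code.

```python
from collections import Counter


def confusion_matrix(true_labels, predicted_labels, classes):
    """Return a nested list: rows are true classes, columns are predictions."""
    pair_counts = Counter(zip(true_labels, predicted_labels))
    return [[pair_counts[(t, p)] for p in classes] for t in classes]


classes = ["product", "category", "other"]

# Hypothetical labels, for illustration only.
true_labels = ["product", "product", "category", "category", "other", "other"]
predicted_labels = ["product", "product", "category", "product", "category", "product"]

matrix = confusion_matrix(true_labels, predicted_labels, classes)

# Entries on the diagonal are predictions that matched the true class.
correct = sum(matrix[i][i] for i in range(len(classes)))

for label, row in zip(classes, matrix):
    print(label, row)
```

In this made-up data every "product" page is labeled correctly while no "other" page is, which shows up as a zero in the last diagonal cell.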

When looking at a confusion matrix, focus on the diagonal squares. The counts there are correct predictions and the counts outside are failures. In the confusion matrix above we can quickly see that the model does really well labeling products, but terribly labeling pages that are not products or categories. Intuitively, we can assume that such pages would not have consistent image usage. Here is the code to put together the confusion matrix: Finally, here is the code to plot the model evaluation:

Resources to learn more

You might be thinking that this is a lot of work just to tell page groups apart, and you are right!

Mirko Obkircher commented in my article for part two that there is a much simpler approach, which is to have your client set up a Google Analytics data layer with the page group type. Very smart recommendation, Mirko! I am using this example for illustration purposes. What if the issue requires a deeper exploratory investigation? If you already started the analysis using Python, your creativity and knowledge are the only limits. If you want to jump onto the machine learning bandwagon, here are some resources I recommend to learn more:

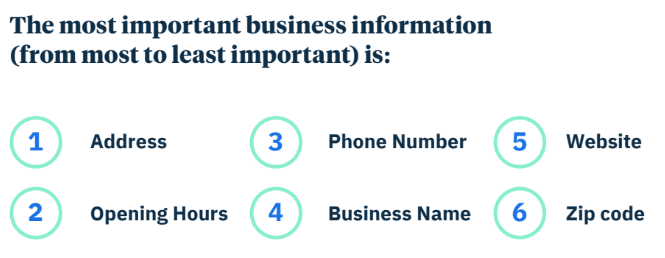

Got any tips or queries? Share them in the comments.

Hamlet Batista is the CEO and founder of RankSense, an agile SEO platform for online retailers and manufacturers. He can be found on Twitter @hamletbatista.

The post Using Python to recover SEO site traffic (Part three) appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/04/17/using-python-to-recover-seo-site-traffic-part-three/

“So… most businesses know about voice search. But has this knowledge helped them optimize for it?” An interesting report recently released by Uberall sought to address that exact question. For as much as we talk about the importance of voice search, and even how to optimize for it, are people actually doing it? In this report, researchers analyzed 73,000 business locations (using the Boston Metro area as their sample set), across 37 different voice search directories, as well as across SMBs, mid-market, and enterprise. They looked at a number of factors including accuracy of address, business hours, phone number, name, website, and zip code, as well as accuracy across various voice search directories. In order, this was how they weighted the importance of a listing’s information:

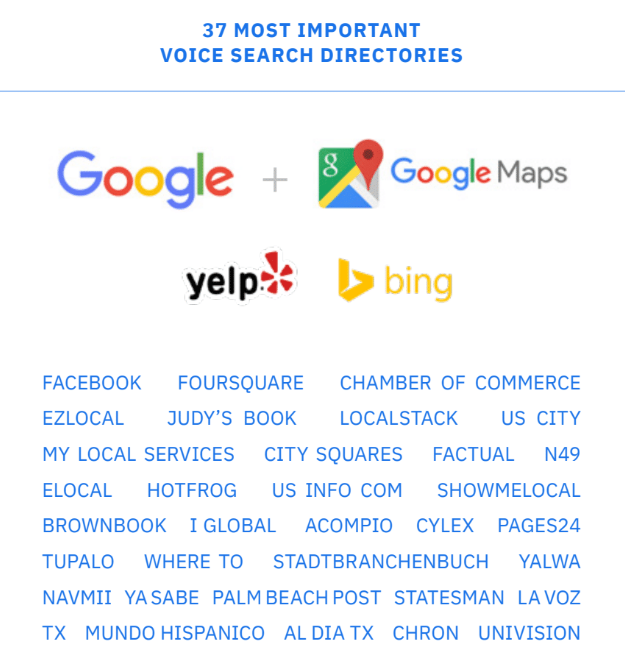

And pictured below are “the 37 most important voice search directories” that they accounted for. Uberall analysts did note, however, that Google (search + maps), Yelp, and Bing together represent about 90% of the score’s weight.

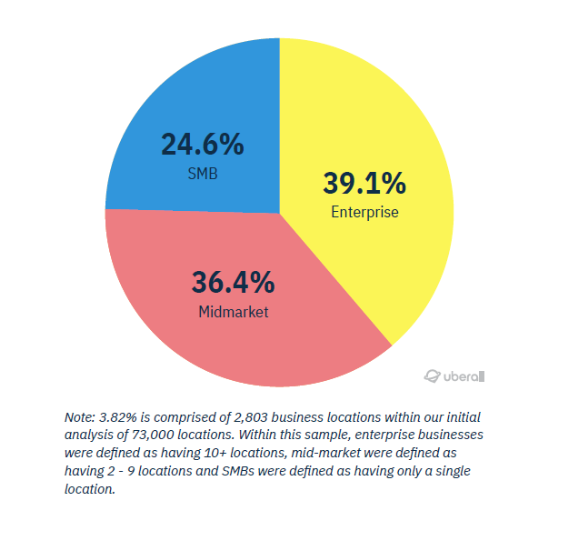

How ready are businesses for voice search?

The ultimate question. Here, we’ll dive into a few key findings from this report.

1. Over 96% of all business locations fail to list their business information correctly

When looking just at the three primary listings locations (Google, Yelp, Bing), Uberall found that only 3.82% of business locations had no critical errors. In other words, more than 96% of all business locations failed to list their business information correctly. Breaking down those 3.82% of perfect business location listings, they were somewhat evenly split across enterprise, mid-market, and SMB, with enterprise having the largest share as one might expect.

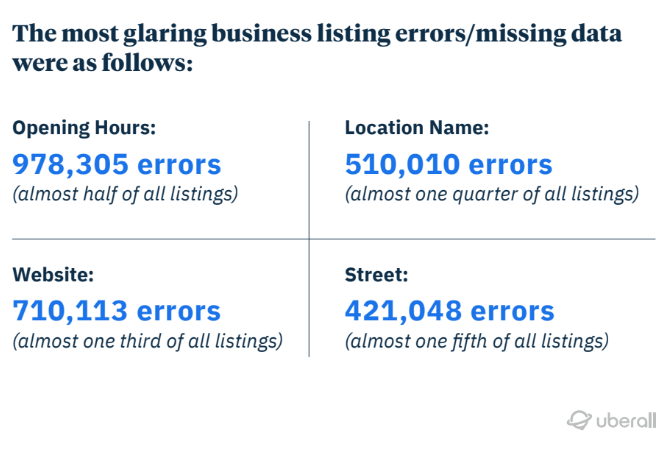

2. The four most common types of listing errors

In their analysis, here’s the breakdown of the most common types of missing or incorrect information:

3. Which types of businesses are most likely to be optimized for voice search?

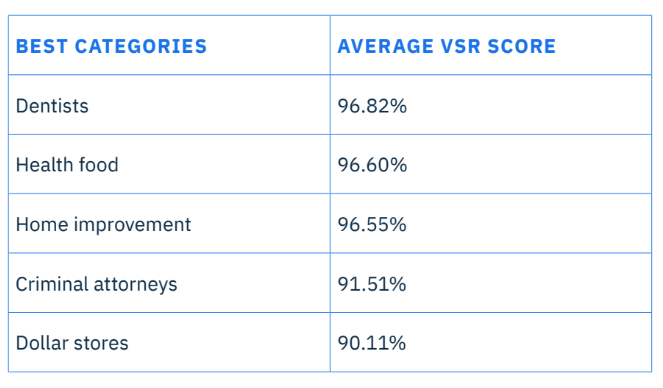

Industries that were found to be most voice search ready included:

Industries that were found to be least voice search ready included:

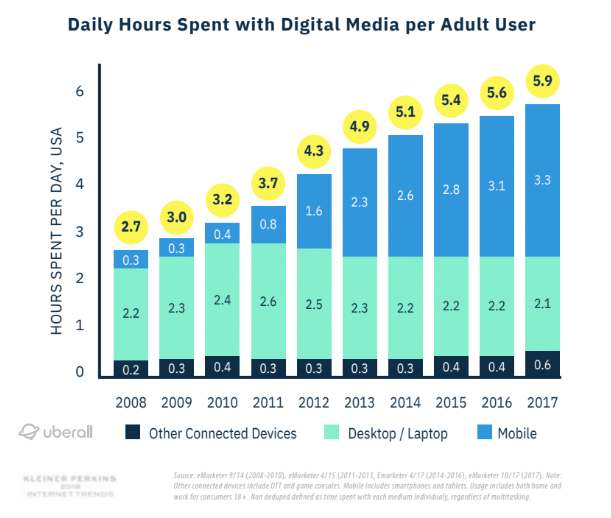

Not much surprise on the most-prepared industries relying heavily on people being able to find their physical locations. Perhaps a bit impressed that criminal attorneys landed so high on the list. Surprising that art galleries ranked second to last, but perhaps this helps explain the decline in traffic of late. And as ever, we can be expectedly disappointed by the technological savvy of congressional representatives.

What’s the cost of businesses not being optimized for voice search?

The next question, of course, is: how much should we care? Uberall spent a nice bit of their report discussing statistics about the history of voice search, how much it’s used, and its predicted growth. Interestingly, they also take a moment to fact check the popular “voice will be 50% of all search by 2020” statistic. Apparently, this was taken from an interview with Andrew Ng (co-founder of Coursera, formerly lead at both Google Brain and Baidu) and was originally referring to the growth of a combined voice and image search, specifically via Baidu in China.

1. On average, adults spend 10x more hours on their phones than they did in 2008

This data was compiled from a number of charts from eMarketer, showing the overall increase in digital media use from 2008 to 2017 (and we can imagine it is even higher now). Specifically, we see how almost all of the growth is driven just by mobile. The connection here, of course, is that mobile devices are one of the most popular devices for voice search, second only perhaps to smart home devices.

2. About 21% of respondents were using voice search every week

According to this study, 21% of respondents were using voice search every week. 57% of respondents said they never used voice search. And about 14% seem to have tried it once or twice and not looked back. In general, it seems people are a bit polarized: either it’s a habit or it’s not.

Regardless, 21% is a sizable number of consumers (though we don’t have information about how many of those searches convert to purchases). And it seems the number is on the rise: the recent report from voicebot.ai showed that smart speaker ownership grew by nearly 40% from 2018 to 2019, among US adults. Overall, the cost of not being optimized for voice search may not be sky high yet. But at the same time, it’s probably never too soon to get your location listings in order and provide accurate information to consumers. You might also like:

The post Study: How ready are businesses for voice search? appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/04/18/voice-search-study-uberall/

Anyone can argue about the intent of a particular action & the outcome that is derived by it. But when the outcome is known, at some point the intent is inferred if the outcome is derived from a source of power & the outcome doesn't change. Or, put another way, if a powerful entity (government, corporation, other organization) disliked an outcome which appeared to benefit them in the short term at great lasting cost to others, they could spend resources to adjust the system. If they don't spend those resources (or, rather, spend them on lobbying rather than improving the ecosystem) then there is no desired change. The outcome is as desired. Change is unwanted.

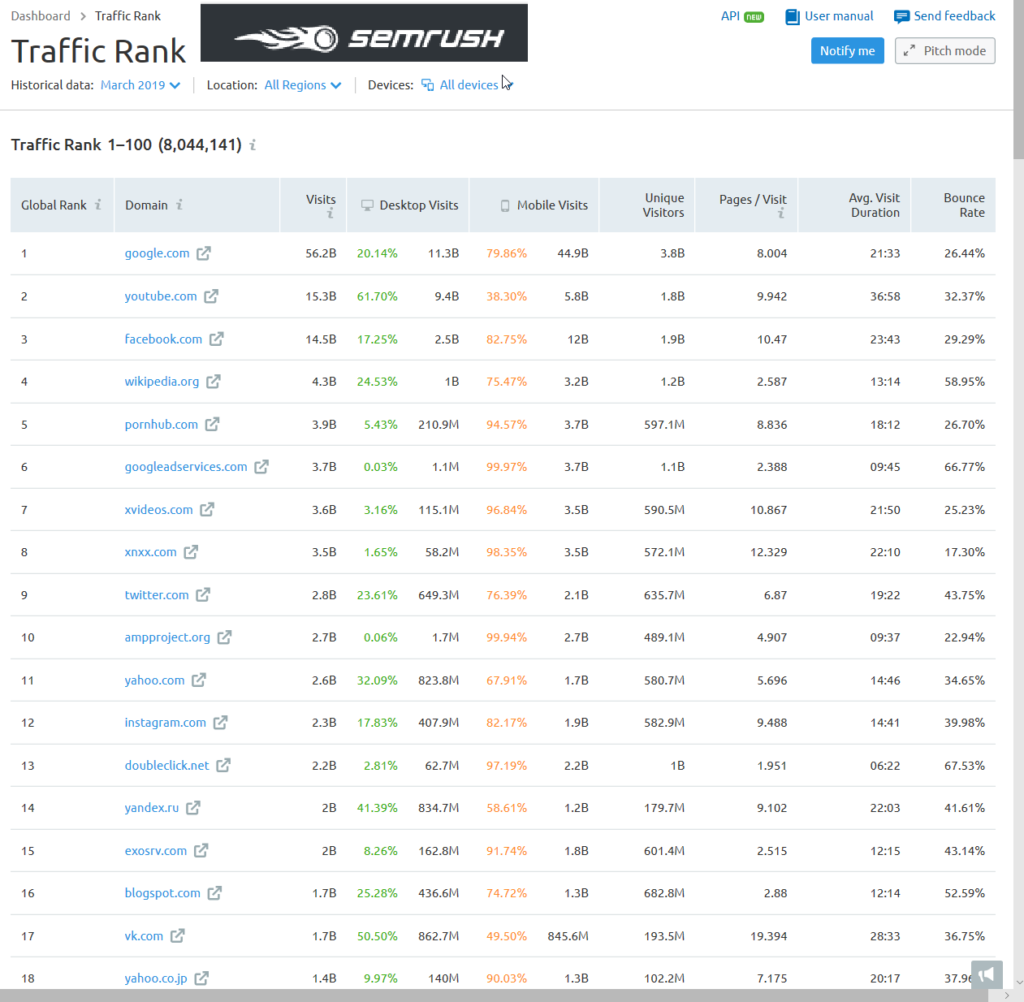

News is a stock vs flow market where the flow of recent events drives most of the traffic to articles. News that is more than a couple days old is no longer news. A news site which stops publishing news stops becoming a habit & quickly loses relevancy. Algorithmically an abandoned archive of old news articles doesn't look much different than eHow, in spite of having a much higher cost structure. According to SEMrush's traffic rank, ampproject.org gets more monthly visits than Yahoo.com.

That actually understates the prevalence of AMP because AMP is generally designed for mobile AND not all AMP-formatted content is displayed on ampproject.org. Part of how AMP was able to get widespread adoption was because in the news vertical the organic search result set was displaced by an AMP block. If you were a news site either you were so differentiated that readers would scroll past the AMP block in the search results to look for you specifically, or you adopted AMP, or you were doomed. Some news organizations like The Guardian have a team of about a dozen people reformatting their content to the duplicative & proprietary AMP format. That's wasteful, but necessary: "In theory, adoption of AMP is voluntary. In reality, publishers that don’t want to see their search traffic evaporate have little choice. New data from publisher analytics firm Chartbeat shows just how much leverage Google has over publishers thanks to its dominant search engine." It seems more than a bit backward that low margin publishers are doing duplicative work to distance themselves from their own readers while improving the profit margins of monopolies. But it is what it is. And that no doubt drew the ire of many publishers across the EU. And now there are AMP Stories to eat up even more visual real estate. If you spent a bunch of money to create a highly differentiated piece of content, why would you prefer that high spend flagship content appear on a third party website rather than your own? Google & Facebook have done such a fantastic job of eating the entire pie that some are celebrating Amazon as a prospective savior to the publishing industry. That view - IMHO - is rather suspect. Where any of the tech monopolies dominate they cram down on partners. The New York Times acquired The Wirecutter in Q4 of 2016. In Q1 of 2017 Amazon adjusted their affiliate fee schedule.
Amazon generally treats consumers well, but they have been much harder on business partners with tough pricing negotiations, counterfeit protections, forced ad buying to have a high enough product rank to be able to rank organically, ad displacement of their organic search results below the fold (even for branded search queries), learning suppliers & cutting out the partners, private label products patterned after top sellers, in some cases running pop over ads for the private label products on product level pages where brands already spent money to drive traffic to the page, etc. They've made things tougher for their partners in a way that mirrors the impact Facebook & Google have had on online publishers:

Google claims they have no idea how content publishers are with the trade off between themselves & the search engine, but every quarter Alphabet publish the share of ad spend occurring on owned & operated sites versus the share spent across the broader publisher network. And in almost every quarter for over a decade straight that ratio has grown worse for publishers.

The aggregate numbers for news publishers are worse than shown above as Google is ramping up ads in video games quite hard. They've partnered with Unity & promptly took away the ability to block ads from appearing in video games using googleadsenseformobileapps.com exclusion (hello flat thumb misclicks, my name is budget & I am gone!) They will also track video game player behavior & alter game play to maximize revenues based on machine learning tied to surveillance of the user's account: "We’re bringing a new approach to monetization that combines ads and in-app purchases in one automated solution. Available today, new smart segmentation features in Google AdMob use machine learning to segment your players based on their likelihood to spend on in-app purchases. Ad units with smart segmentation will show ads only to users who are predicted not to spend on in-app purchases. Players who are predicted to spend will see no ads, and can simply continue playing." And how does the growth of ampproject.org square against the following wisdom?

Literally only yesterday did Google begin supporting instant loading of self-hosted AMP pages.

China has a different set of tech leaders than the United States. Baidu, Alibaba, Tencent (BAT) instead of Facebook, Amazon, Apple, Netflix, Google (FANG). China tech companies may have won their domestic markets in part based on superior technology or better knowledge of the local culture, though those same companies have largely gone nowhere fast in most foreign markets. A big part of winning was governmental assistance in putting a foot on the scales. Part of the US-China trade war is about who controls the virtual "seas" upon which value flows:

China maintains Huawei is an employee-owned company. But that proposition is suspect. Broadly stealing technology is vital to the growth of the Chinese economy & they have no incentive to stop unless their leading companies pay a direct cost. Meanwhile, China is investigating Ericsson over licensing technology. India has taken notice of the success of Chinese tech companies & thus began to promote "national champion" company policies. That, in turn, has also meant some of the Chinese-styled laws requiring localized data, antitrust inquiries, foreign ownership restrictions, requirements for platforms to not sell their own goods, promoting limits on data encryption, etc.

Amazon vowed to invest $5 billion in India & they have done some remarkable work on logistics there. Walmart acquired Flipkart for $16 billion. Other emerging markets also have many local ecommerce leaders like Jumia, MercadoLibre, OLX, Gumtree, Takealot, Konga, Kilimall, BidOrBuy, Tokopedia, Bukalapak, Shopee, Lazada. If you live in the US you may have never heard of *any* of those companies. And if you live in an emerging market you may have never interacted with Amazon or eBay. It makes sense that ecommerce leadership would be more localized since it requires moving things in the physical economy, dealing with local currencies, managing inventory, shipping goods, etc. whereas information flows are just bits floating on a fiber optic cable. If the Internet is primarily seen as a communications platform it is easy for people in some emerging markets to think Facebook is the Internet. Free communication with friends and family members is a compelling offer & as the cost of data drops web usage increases. At the same time, the web is incredibly deflationary. Every free form of entertainment which consumes time is time that is not spent consuming something else. Add the technological disruption to the wealth polarization that happened in the wake of the great recession, then combine that with algorithms that promote extremist views & it is clearly causing increasing conflict. If you are a parent and you think your child has no shot at a brighter future than your own life it is easy to be full of rage. Empathy can radicalize otherwise normal people by giving them a more polarized view of the world:

A complete lack of empathy could allow a psychopath (hi Chris!) to commit extreme crimes while feeling no guilt, shame or remorse. Extreme empathy can have the same sort of outcome:

News feeds will be read. Villages will be razed. Lynch mobs will become commonplace. Many people will end up murdered by algorithmically generated empathy. As technology increases absentee ownership & financial leverage, a society led by morally agnostic algorithms is not going to become more egalitarian.

When politicians throw fuel on the fire it only gets worse:

Mark Zuckerberg won't get caught downstream from platform blowback as he spends $20 million a year on his security. The web is a mirror. Engagement-based algorithms reinforce our perceptions & identities. And every important story has at least 2 sides!

Some may "learn" vaccines don't work. Others may learn the vaccines their own children took did not work, as it failed to protect them from the antivax content spread by Facebook & Google, absorbed by people spreading measles & Medieval diseases. Passion drives engagement, which drives algorithmic distribution: "There’s an asymmetry of passion at work. Which is to say, there’s very little counter-content to surface because it simply doesn’t occur to regular people (or, in this case, actual medical experts) that there’s a need to produce counter-content." As the costs of "free" become harder to hide, social media companies which currently sell emerging markets as their next big growth area will end up having embedded regulatory compliance costs which will end up exceeding any sort of prospective revenue they could hope to generate. The Pinterest S1 shows almost all their growth is in emerging markets, yet almost all their revenue is inside the United States. As governments around the world see the real-world cost of the foreign tech companies & view some of them as piggy banks, eventually the likes of Facebook or Google will pull out of a variety of markets they no longer feel worth serving. It will be like Google did in mainland China with search after discovering pervasive hacking of activist Gmail accounts.

Lower friction & lower cost information markets will face more junk fees, hurdles & even some legitimate regulations. Information markets will start to behave more like physical goods markets. The tech companies presume they will be able to use satellites, drones & balloons to beam in Internet while avoiding messy local issues tied to real world infrastructure, but when a local wealthy player is betting against them they'll probably end up losing those markets: "One of the biggest cheerleaders for the new rules was Reliance Jio, a fast-growing mobile phone company controlled by Mukesh Ambani, India’s richest industrialist. Mr. Ambani, an ally of Mr. Modi, has made no secret of his plans to turn Reliance Jio into an all-purpose information service that offers streaming video and music, messaging, money transfer, online shopping, and home broadband services."

Publishers do not have "their mojo back" because the tech companies have been so good to them, but rather because the tech companies have been so aggressive that they've earned so much blowback, which will in turn lead publishers to opt out of future deals, which will eventually lead more people back to the trusted brands of yesterday. Publishers feeling guilty about taking advertorial money from the tech companies to spread their propaganda will offset its publication with opinion pieces pointing in the other direction: "This is a lobbying campaign in which buying the good opinion of news brands is clearly important. If it was about reaching a target audience, there are plenty of metrics to suggest his words would reach further – at no cost – on Facebook. Similarly, Google is upping its presence in a less obvious manner via assorted media initiatives on both sides of the Atlantic. Its more direct approach to funding journalism seems to have the desired effect of making all media organisations (and indeed many academic institutions) touched by its money slightly less questioning and critical of its motives."

When Facebook goes down direct visits to leading news brand sites go up. When Google penalizes a no-name me-too site almost nobody realizes it is missing. But if a big publisher opts out of the ecosystem people will notice. The reliance on the tech platforms is largely a mirage. If enough key players were to opt out at the same time people would quickly reorient their information consumption habits. If the platforms can change their focus overnight then why can't publishers band together & choose to dump them?

In Europe there is GDPR, which aimed to protect user privacy, but ultimately acted as a tax on innovation by local startups while being a subsidy to the big online ad networks. They also have Article 11 & Article 13, which passed in spite of Google's best efforts on the scaremongering anti-SERP tests, lobbying & propaganda fronts: "Google has sparked criticism by encouraging news publishers participating in its Digital News Initiative to lobby against proposed changes to EU copyright law at a time when the beleaguered sector is increasingly turning to the search giant for help." Remember the Eric Schmidt comment about how brands are how you sort out (the non-YouTube portion of) the cesspool? As it turns out, he was allegedly wrong as Google claims they have been fighting for the little guy the whole time:

Facebook claims there is a local news problem: "Facebook Inc. has been looking to boost its local-news offerings since a 2017 survey showed most of its users were clamoring for more. It has run into a problem: There simply isn’t enough local news in vast swaths of the country. ... more than one in five newspapers have closed in the past decade and a half, leaving half the counties in the nation with just one newspaper, and 200 counties with no newspaper at all." Google is so for the little guy that for their local news experiments they've partnered with a private equity backed newspaper roll up firm & another newspaper chain which did overpriced acquisitions & is trying to act like a PE firm (trying to not get eaten by the PE firm).

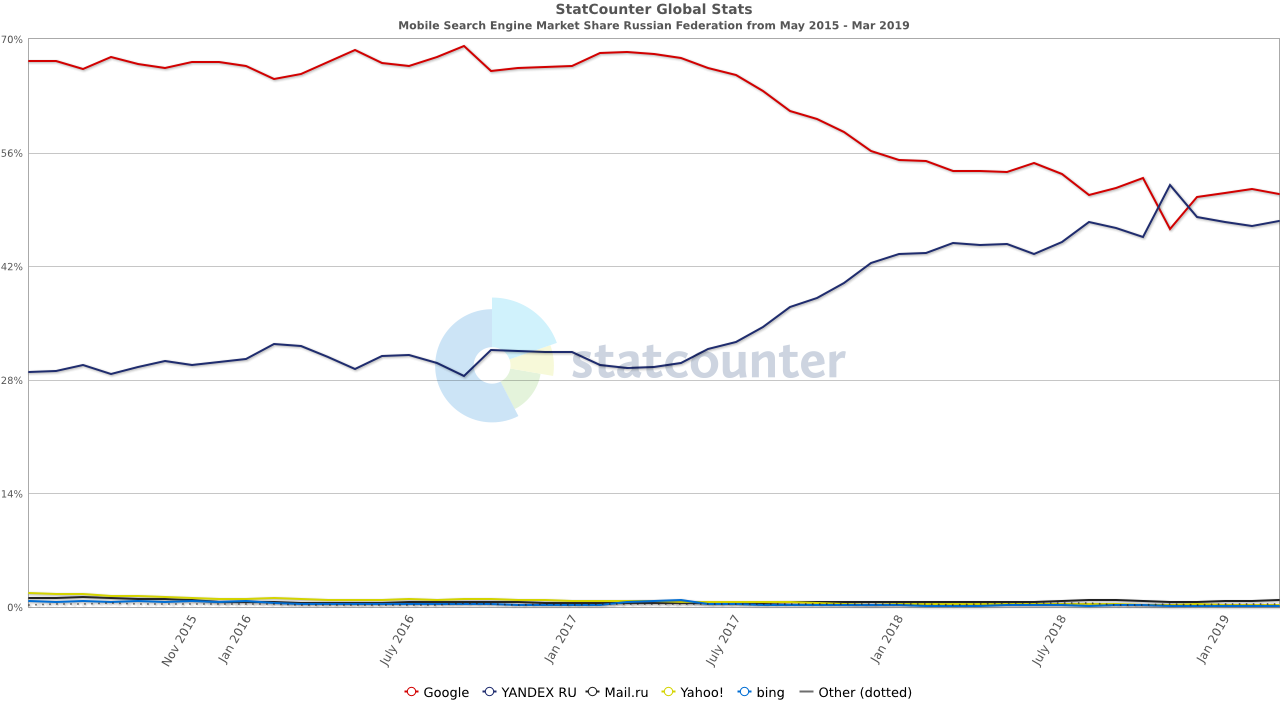

Does the above stock chart look in any way healthy? Does it give off the scent of a firm that understood the impact of digital & rode it to new heights? If you want good market-based outcomes, why not partner with journalists directly versus operating through PE chop shops? If Patch is profitable & Google were a neutral ranking system based on quality, couldn't Google partner with journalists directly? Throwing a few dollars at a PE firm in some nebulous partnership sure beats the sort of regulations coming out of the EU. And the EU's regulations (and prior link tax attempts) are in addition to the three multi billion Euro fines the European Union has levied against Alphabet for shopping search, Android & AdSense. Google was also fined in Russia over Android bundling. The fine was tiny, but after consumers gained a search engine choice screen (much like Google pushed for in Europe on Microsoft years ago) Yandex's share of mobile search grew quickly.

The UK recently published a white paper on online harms. In some ways it is a regulation just like the tech companies might offer to participants in their ecosystems:

If web publishers should monitor inbound links to look for anything suspicious then the big platforms sure as hell have the resources & profit margins to monitor behavior on their own websites. Australia passed the Sharing of Abhorrent Violent Material bill which requires platforms to expeditiously remove violent videos & notify the Australian police about them. There are other layers of fracturing going on in the web as well. Programmatic advertising shifted revenue from publishers to adtech companies & the largest ad sellers. Ad blockers further lower the ad revenues of many publishers. If you routinely use an ad blocker, try surfing the web for a while without one & you will notice layover welcome AdSense ads on sites as you browse the web - the very type of ad they were allegedly against when promoting AMP.

Tracking protection in browsers & ad blocking features built directly into browsers leave publishers more uncertain. And who even knows who visited an AMP page hosted on a third party server, particularly when things like GDPR are mixed in? Those who lack first party data may end up having to make large acquisitions to stay relevant. Voice search & personal assistants are now ad channels.

App stores are removing VPNs in China, removing TikTok in India, and keeping female tracking apps in Saudi Arabia. App stores are centralized chokepoints for governments. Every centralized service is at risk of censorship. Web browsers from key state-connected players can also censor messages spread by developers on platforms like GitHub. Microsoft's newest Edge web browser is based on Chromium, the source of Google Chrome. While Mozilla Firefox gets most of their revenue from a search deal with Google, Google has still gone out of its way to use its services to both promote Chrome with pop overs AND break in competing web browsers:

As phone sales fall & app downloads stall a hardware company like Apple is pushing hard into services while quietly raking in utterly fantastic ad revenues from search & ads in their app store. Part of the reason people are downloading fewer apps is so many apps require registration as soon as they are opened, or only let a user engage with them for seconds before pushing aggressive upsells. And then many apps which were formerly one-off purchases are becoming subscription plays. As traffic acquisition costs have jumped, many apps must engage in sleight of hand behaviors (free but not really, we are collecting data totally unrelated to the purpose of our app & oops we sold your data, etc.) in order to get the numbers to back out. This in turn causes app stores to slow down app reviews. Apple acquired the news subscription service Texture & turned it into Apple News Plus. Not only is Apple keeping half the subscription revenues, but soon the service will only work for people using Apple devices, leaving nearly 100,000 other subscribers out in the cold: "if you’re part of the 30% who used Texture to get your favorite magazines digitally on Android or Windows devices, you will soon be out of luck. Only Apple iOS devices will be able to access the 300 magazines available from publishers. At the time of the sale in March 2018 to Apple, Texture had about 240,000 subscribers." Apple is also going to spend over a half-billion Dollars exclusively licensing independently developed games:

Verizon wants to launch a video game streaming service. It will probably be almost as successful as their Go90 OTT service was. Microsoft is pushing to make Xbox games work on Android devices. Amazon is developing a game streaming service to complement Twitch.

Google, having a bit of Twitch envy, is also launching a video game streaming service which will be deeply integrated into YouTube: "With Stadia, YouTube watchers can press “Play now” at the end of a video, and be brought into the game within 5 seconds. The service provides “instant access” via button or link, just like any other piece of content on the web." Google will also launch their own game studio making exclusive games for their platform. When consoles don't use discs or cartridges so they can sell a subscription access to their software library it is hard to be a game retailer! GameStop's stock has been performing like an ICO. And these sorts of announcements from the tech companies have been hitting stock prices for companies like Nintendo & Sony: “There is no doubt this service makes life even more difficult for established platforms,” Amir Anvarzadeh, a market strategist at Asymmetric Advisors Pte, said in a note to clients. “Google will help further fragment the gaming market which is already coming under pressure by big games which have adopted the mobile gaming business model of giving the titles away for free in hope of generating in-game content sales.”

The big tech companies which promoted everything in adjacent markets being free are now erecting paywalls for themselves, balkanizing the web by paying for exclusives to drive their bundled subscriptions. How many paid movie streaming services will the web have by the end of next year? 20? 50? Does anybody know? Disney alone will operate Disney+, ESPN+ as well as Hulu. And then the tech companies are not only licensing exclusives to drive their subscription-based services, but we're going to see more exclusionary policies like YouTube not working on Amazon Echo, Netflix dumping support for Apple's Airplay, or Amazon refusing to sell devices like Chromecast or Apple TV.
The good news in a fractured web is a broader publishing industry that contains many micro markets will have many opportunities embedded in it. A Facebook pivot away from games toward news, or a pivot away from news toward video won't kill third party publishers who have a more diverse traffic profile and more direct revenues. And a regional law blocking porn or gambling websites might lead to an increase in demand for VPNs or free to play points-based games with paid upgrades. Even the rise of metered paywalls will lead to people using more web browsers & more VPNs. Each fracture (good or bad) will create more market edges & ultimately more opportunities. Chinese enforcement of their gambling laws created a real estate boom in Manila. So long as there are 4 or 5 game stores, 4 or 5 movie streaming sites, etc. ... they have to compete on merit or use money to try to buy exclusives. Either way is better than the old monopoly strategy of take it or leave it ultimatums. The publisher wins because there is a competitive bid. There won't be an arbitrary 30% tax on everything. So long as there is competition from the open web there will be means to bypass the junk fees & the most successful companies that do so might create their own stores with a lower rate: "Mr. Schachter estimates that Apple and Google could see a hit of about 14% to pretax earnings if they reduced their own app commissions to match Epic’s take." As the big media companies & big tech companies race to create subscription products they'll spend many billions on exclusives. And they will be training consumers that there's nothing wrong with paying for content. This will eventually lead to hundreds of thousands or even millions of successful niche publications which have incentives better aligned than all the issues the ad supported web has faced.

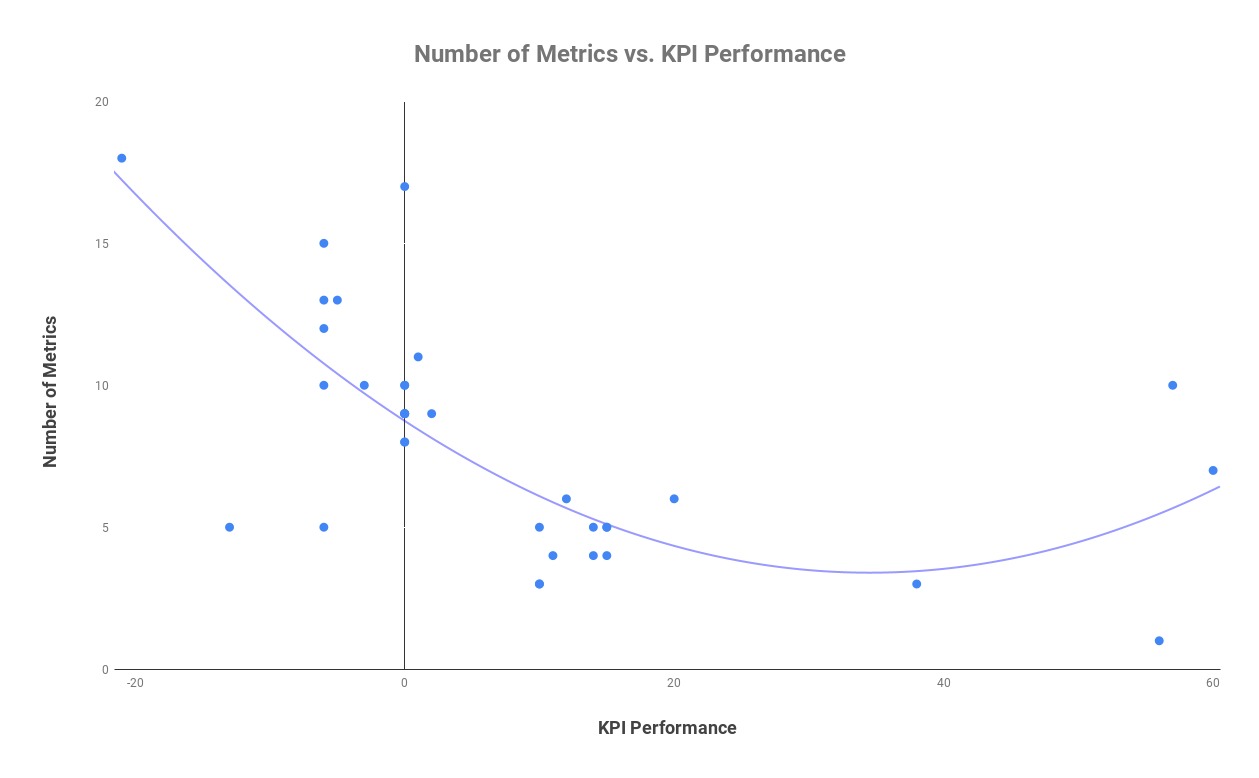

from http://www.seobook.com/fractured

One of the most overused phrases in content marketing is how it is an ever-changing landscape, forcing agencies and marketers to adapt and improve their existing processes. In a short space of time, a topic can go from being newsworthy to negligible, all while certain types of content become tedious to the press and its readers. A vast amount of the work we do, at Kaizen and many other similar agencies, is to create content with the sole purpose of building high authority links, making it all the more imperative that we are conscious of the changes and trends outlined above. If we were to split the creative process into three sections (content, design, and outreach strategy), how are we able to engineer our own successes and failures to provide us with a framework for future campaigns?

Three important factors for producing link-worthy content

Over the past month, I’ve analyzed over 120 pieces of content across 16 industries to locate and define the common threads between campaigns that exceed or fall short of their expectations. From the amount of data used and visualized to the importance of effective headline storytelling, the insight is a way of both rationalizing and reshaping our approach to content production.

1. Not too much data: our study showed an average of just over five metrics

Behind every great piece of content is (usually) a unique or noteworthy set of data. Both static and interactive content enable us to display limitless amounts of research which provide the origins of the stories we try to communicate. However many figures or metrics you choose to visualize, there is always a point where a journalist or reader switches off. This glass ceiling is difficult to pinpoint and depends on the type of content, and the industry or readership you’re looking to appeal to, but a more granular study of good and poor performing campaigns that I performed suggested some benefits of refining data sets.

Observations

A starting point for any piece of research is the individual metrics, whether it is cost, type, or essentially anything worth measuring and comparing. In my research, the content campaigns that exceeded our typical KPI used an average of just over 5 metrics per piece, compared to almost double that in campaigns with either a normal or below satisfactory performance. The graph below shows the correlation between a lower number of metrics and a higher link performance.

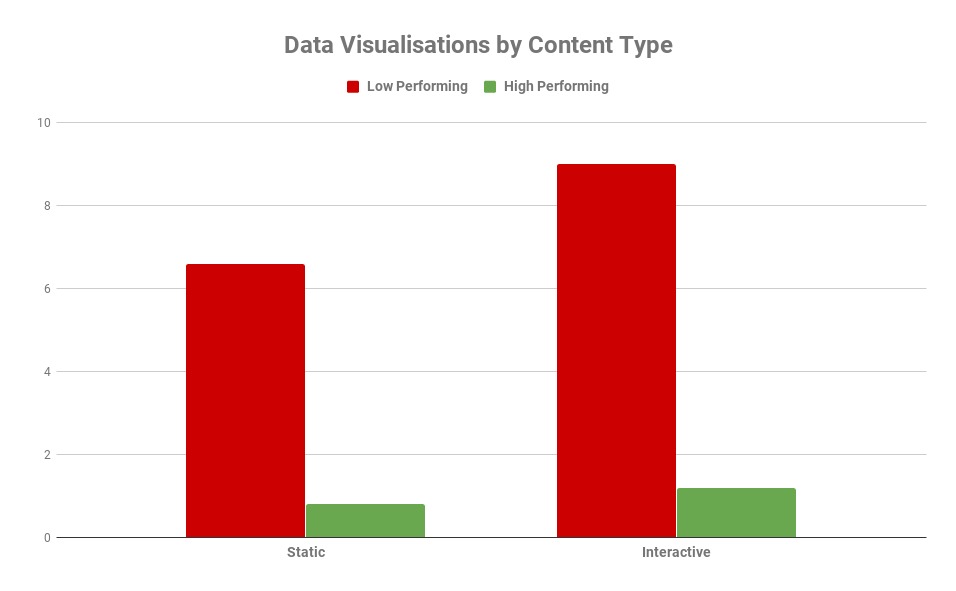

An example of these findings in practice can be found in an infographic study completed for online travel retailer Lastminute.com that sought to find the world’s most chilled out countries. Following a comprehensive study of 36 countries across 10 metrics, the task was to refine these figures in a way that can be translated well through its design. The number of countries was whittled down to just the top 15, and the metrics were condensed into four indexes on which the rankings were based. The decision to not showcase the data in its entirety proved fruitful, securing over 50 links, including coverage from the Mail Online and Lonely Planet. As an individual who very much enjoys partaking in the research process, it can be extremely difficult to sacrifice any element of your work, but it is that level of tact in the production of content that distinguishes one piece from another.

2. Simple, powerful data visualizations: our analysis showed highest achievers had just one visualization

Regardless of how saturated the content marketing industry becomes, we are graced every year with new and innovative ways of visualizing data. The balancing act between originality in your design and an unnecessarily complex data-visualization is often the point on which success and failure can pivot. As is the case with data, overloading a piece of content with a mass of multi-faceted graphs and charts is a surefire way of alienating your users, leaving them either bored or confused.

Observations

For my study, I decided to look at the content that contained data visualizations that failed to hit the mark and see whether the quality is as much of a problem as quantity in terms of design. As I carried out the analysis, I noted two patterns: pieces where one visual would incorporate most or all of the study, and pieces where the same illustration was replicated several times for a country, region or sector.

For instance, this study, from medical travel insurance provider Get Going, on reliable airlines condenses all the key information into one single data-visualization. Conversely, this piece from The Guardian on the gender pay gap shows how it can be effective to use one visual several times to present your data. Unsurprisingly, many of the low scorers in my research averaged around eight different forms of data visualizations while high achievers contained just one. The graph below showcases how many data-visualizations are used on average by high and low performing pieces, both static and interactive. Low performing static examples contained an average of just over six, with less than one for their higher-scoring counterparts. For interactive content, the optimum is just over one with poor performing content containing almost nine per piece.

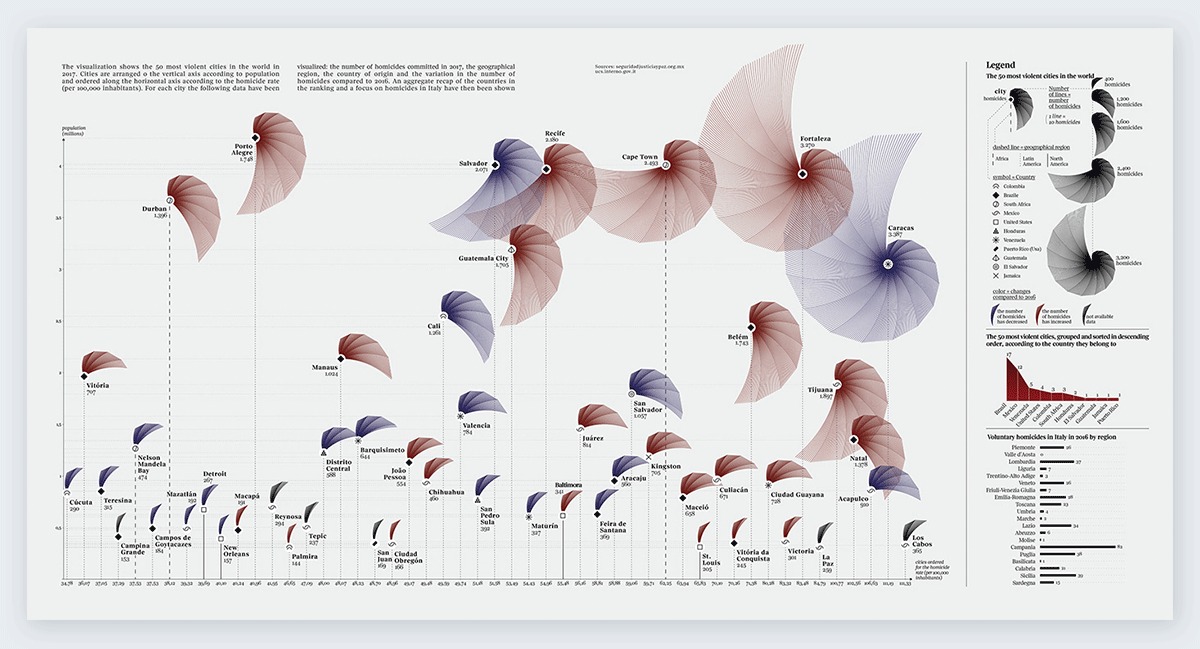

In examples where the same type of graph or chart was used repeatedly, poor performers had approximately 33 per piece, with their more favorable counterparts using just three. It is important to note that ranking-based pieces often require the repetition of a visual in order to tell a story, but once again this is part of the balancing act for creatives in terms of what type and how many data-visualizations one utilizes. A fine example of an effective illustration of the data study contained in one visual comes from a 2017 piece by Federica Fragapane for Italian publication La Lettura, showcasing the most violent cities in the world. The chart depicts each city as a shape sized by its homicide rate, with other small indicators defined in the legend to the right of the graphic. The aesthetic qualities of the graph give a campaign, fairly morbid in the topic, an extended appeal beyond the subject of just global crime. While the term “design-led” is so-often thrown around, this example proves how effective it can be to integrate visuals effectively through your data. The piece, produced originally for print, proved hugely successful in the design space, with 18 referring domains from sites such as Visme.co.

3. Pandering to the press: over a third of our published links used the same headline as our pitch email subject line

Kaizen produces hundreds of campaigns on a yearly basis across a range of industries, meaning the task of looking inward is as necessary today as it ever has been. Competition means that press contacts are looking for something extra special to warrant your content’s publication. While ingenuity is required in every area of content marketing, it’s equally important to recognize the importance of getting the basics right. The task of outreach can be won and lost in several ways, but your subject line is, and will always be, the most significant component of your pitch. Whether you encapsulate your content in a single sentence or highlight your most attention-worthy finding, writing an email headline is a laborious but crucial task. My task through my research was to find how vital it is in terms of the end result of achieving coverage.

Observations

As part of my analysis, I recorded the backlinks for a sample of our high and average performing content, along with the headlines used in the coverage for each campaign. I found in better-performing examples, over a third of links used the same headlines used in our pitch emails, emphasizing the importance of effective storytelling in every area of your PR process. Below is an illustration in the SERPs of how far an effective headline can take you, with example coverage from one of our most successful pieces for TotallyMoney on work/life balance in Europe.
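The headline comparison described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the method used in the study: the function names, the normalization rule (lowercase, punctuation stripped, whitespace collapsed), and the sample headlines are all assumptions made up for the example.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, replace anything that is not a letter, digit, or space with a space, and collapse whitespace."""
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return " ".join(text.split())


def headline_match_share(subject_line: str, coverage_headlines: list[str]) -> float:
    """Return the fraction of coverage headlines that match the pitch subject line after normalization."""
    if not coverage_headlines:
        return 0.0
    target = normalize(subject_line)
    matches = sum(1 for headline in coverage_headlines if normalize(headline) == target)
    return matches / len(coverage_headlines)


# Hypothetical pitch subject line and coverage headlines, invented for illustration.
pitch = "Europe's best countries for work-life balance"
coverage = [
    "Europe’s Best Countries for Work/Life Balance",
    "Where in Europe do people work the longest hours?",
    "Study ranks European cities by commute time",
]

print(f"Share of coverage reusing the pitch headline: {headline_match_share(pitch, coverage):.0%}")
```

Exact matching after normalization is a deliberately strict choice. A looser comparison, such as a similarity threshold, would count lightly edited headlines as well, at the cost of some false positives.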

Another area I was keen to investigate, given the time and effort that goes into it, is how press releases are used across the coverage we get. Using scraping software, I was able to pull out the copy from each article where a follow link was achieved and compare it to the press releases we have produced. It was pleasing to see that one in five links contained at least a paragraph of copy used in our press materials. In contrast, just seven percent of the coverage within the lower performing campaigns contained a reference to our press releases, and an even lower four percent used headlines from our email subject lines.

Final thoughts

These correlations, similar to the ones discussed previously, not only suggest how vital the execution of basic processes is, but also serve as a reminder that a campaign can do well or fall down at so many different points of production. For marketers, analysis of this nature indicates that a refinement of creative operations is a more secure route for your content and its coverage. Don’t think of it as “less is more” but a case of picking the right tools for the job at hand.

Nathan Abbott is Content Manager at Kaizen.

The post Three fundamental factors in the production of link-building content appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/04/15/three-fundamental-factors-in-the-production-of-link-building-content/

Instagram is a phenomenon of our time. The photo-sharing app has 7.7 billion users by now (and counting). One billion people use Instagram every month and 500 million use the platform every day. Its engagement is also 10 times higher than that of Facebook, 54 times higher than Pinterest’s, and 84 times higher than Twitter’s. All kinds of businesses ranging from your teen neighbor making earrings to huge corporations and media are on Instagram. And for a good reason: 80% of Instagram accounts follow at least one business.

[Screenshot taken from the Instagram Business homepage]

At times when Facebook is becoming more and more Messenger-based and Twitter revolves around politics and social issues, Instagram stands to be the platform for friends, strangers, and brands alike. It’s no surprise we’re so serious about Instagram marketing and the tools that help us with it. Below is the list of such tools which covers everything from filters to analytics.

19 top Instagram marketing tools

1. Grum

Grum is a scheduling tool that lets you publish content (both photos and videos) on Instagram. You can publish from multiple accounts at the same time and tag the users. You can do that right from your desktop.
Price: Starts at $9.9/month. Offers a free trial for 3 days.

2. Awario

Awario is a social media monitoring tool that finds mentions of your brand (or any other keyword) across the web, news/blogs, and social media platforms, including Instagram. By analyzing mentions of your brand on the platform, it tells you who your brand advocates and industry influencers are, what the sentiment behind your brand (positive, negative, or neutral) is, as well as the languages and locations of your audience. It also analyzes the growth and reach of your mentions, and tells you how you compare to your competitors.
Price: Starts at $29/month. Offers a free trial for 14 days.

3. Buffer

Buffer is another scheduling tool. However, it includes Instagram among other social networks rather than focusing on Instagram alone. With Buffer, you can schedule content to be published across Instagram, Facebook, Twitter, Pinterest, and LinkedIn. You can publish the same or different messages across different platforms. You can also review how your posts are performing in terms of engagement, impressions, and clicks. The tool can be used by up to 25 team members, and you can assign them the appropriate access levels.
Price: Starts at $15/month. Offers free 7-day or 14-day trials depending on the plan.

4. Hashtags for likes

Hashtags for likes is a simple tool that suggests the most relevant trending hashtags. Knowing the most popular hashtags in real time helps brands keep up with trends, bandwagon on the news, and ultimately grow followers.
Price: $9.99/month.

5. Iconosquare

Iconosquare is a social media analytics tool that works for Instagram and Facebook. It shows you the metrics on content performance and engagement as well as on your followers. You’ll discover the best times to post and understand your followers better. The tool also analyzes Instagram Stories. Besides analytics, you can schedule posts and monitor tags and comments about your brand.
Price: Starts at $39/month. A free 14-day trial is available.

6. Canva

Canva is a design tool that is a great fit for marketers and companies that don’t have an in-house designer. Among other things, Canva helps create perfect Instagram stories. Stylish templates and easy design tools ensure that your Story stands out, which, again, isn’t easy in the world of Instagram.
Price: Free

7. ShortStack

ShortStack is a tool to run Instagram contests. Contests are huge on this platform: they cause loads of buzz, increase brand awareness, and attract new followers. They are a practice loved by marketers. ShortStack gathers all user-generated content, such as images that have been posted on your content hashtag, and displays them. It also keeps track of your campaign’s performance, showing your traffic, engagement, and other valuable data.
Price: Free up to 100 entries. Paid plans start at $29/month.

8. Soldsie

Soldsie is a handy tool that helps you to sell on Instagram and Facebook using comments. All you have to do is upload a product picture with relevant product information. Users who are registered with Soldsie can simply comment on the photo, and Soldsie will turn that into a transaction. More expensive Soldsie plans are also integrated with Shopify.
Price: Starts at $49/month plus a 5.9% transaction fee.

9. Social Rank

Social Rank is a tool that identifies and analyzes your audience. You can identify influencers among your followers, and see who engages with your brand and with what frequency. You can sort your followers in lists that are easy to work with (for example: most valuable, most engaged, and others). You can also filter your audience by bio keyword, word/hashtag, and geographic location.
Price: Available on request.

10. Plann

Plann is an Instagram social media management tool. It allows you to design, edit, schedule, and analyze your posts. For example, you can edit the Instagram grid to look just as you wish. You can rearrange, organize, crop, and schedule your Instagram Stories. All exciting stats, from best times to post and best-performing hashtags to your best-performing color schemes, are available. And you can also collaborate with other marketers to run your Instagram account together.
Price: Free, paid plans start from $6/month.

11. Social Insights

Social Insights is another platform that offers many important Instagram marketing features, such as scheduling and posting from your computer, identifying and organizing your followers, and analyzing followers’ growth, interactions, and engagement. You can add other team members without sharing your Instagram login.
Price: Starts at $29/month. A free 14-day trial is available.

12. Instagram Ads by Mailchimp

If you’re already using Mailchimp, its Instagram Ads feature might come in handy. The tool lets you use Mailchimp contact lists to create Instagram campaigns. The whole process (creating, buying, and tracking results of your ads) is, therefore, in the familiar place and powered by data.
Price: No extra fees if you’re using Mailchimp.

13. Unfold – Story Creator

Unfold – Story Creator is an iOS app that makes lifestyle, fashion, and travel content more professional-looking. The app offers stylish templates, advanced fonts and text tools, and exports your stories in high resolution so that you can share them to other platforms besides Instagram.
Price: Free

14. Picodash

Picodash is an Instagram tool that finds target audiences and influencers on the platform. It lets you export your and your competitors’ Instagram followers and following lists, users that have used a specific hashtag, posted at a specific location or venue, commented on or liked a specific post, as well as tagged users. You can also download any account stories or highlighted stories.
Price: Starts from $10 for a Followers/Hashtag Posts export. You can also request a sample of 100 for free before you order a full export report.

15. Wyng

Wyng is an enterprise-level platform that finds user-generated content with a specific mention or hashtag, exports it, and gets the rights to this content. This is very helpful for running contests. Instagram is, however, a tiny fraction of what the tool covers.
Price: Available on request. A free 14-day trial is available.

16. Afterlight

Afterlight is an iOS/Android image editing app that makes your content look more professional and refined. It offers plenty of unique filters, natural effects, and frames.
Price: $2.99

17. Sendible

Sendible is a popular social media management platform that lets you run accounts on different social media platforms, including Instagram. It’s integrated with some other tools that are useful for Instagram, such as Canva. The tool does scheduling, monitors mentions, and tracks the performance of your Instagram posts. You can also team up with other marketers and work together on your Instagram marketing (and other) goals.
Price: Starts at $29/month. A free 14-day trial is available.

18. Olapic

Olapic is an advanced visual commerce platform. It collects user-generated video content in real time, publishes it to your social media channels (including Instagram), makes it shoppable, and measures and predicts which content will perform best. It goes far beyond Instagram and even social media. What is more, it obtains rights for the content for you so that you’re able to use it across your advertising, email, and offline channels.
Price: Available on request.

19. Pablo

Pablo (made by Buffer) is a platform that lets you easily create beautiful images for your Instagram marketing purposes. You can choose photos from Pablo’s own library which includes more than 500,000 images, add text (25+ stylish fonts are available) and format. The resizing option for various social platforms, including Instagram, will ensure your image fits perfectly.
Price: Free

Conclusion

As you can see, there are plenty of tools to choose from. Check them out, spot the ones that you need, and take your Instagram marketing to a whole new level.

Aleh is the Founder and CMO at SEO PowerSuite and Awario. He can be found on Twitter at @ab80.

The post Top 19 Instagram marketing tools to use for success appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/04/15/top-nineteen-instagram-marketing-tools-to-use-for-success/

There has been an abundance of hand-wringing articles wondering whether the era of the phone call is over, not to mention speculation that millennials would give up the option to make a phone call altogether if it meant unlimited data. But actually, the rise of direct dialing through voice assistants and click to call buttons for mobile search means that calls are now totally intertwined with online activity. Calling versus buying online is no longer an either/or proposition.

When it comes to complicated purchases like insurance, healthcare, and mortgages, the need for human help is even more pronounced. Over half of consumers prefer to talk to an agent on the phone in these high-stakes situations. In fact, 70% of consumers have used a click to call button. And three times as many people prefer speaking with a live human over a tedious web form.

And calls aren’t just great for consumers. A recent study by Invoca found that calls actually convert at ten times the rate of clicks. However, if you’re finding that your business line isn’t ringing quite as often as you’d like it to, here are some surefire ways to optimize your search ads to drive more high-value phone calls.

Content produced in collaboration with Invoca.

Four ways to optimize your paid search ads for more phone calls

If you’re waiting for the phone to ring, make sure your audiences know that you’re ready to take their call. In the days of landlines, if customers wanted a service, they simply took out the yellow pages and thumbed through the business listings until they found the service they were looking for. These days, your audience is much more likely to find you online, either through search engines or social media. But that doesn’t mean they aren’t looking for a human to answer their questions. If you’re hoping to drive more calls, make sure your ads are getting that idea across clearly and directly. For example, if your business offers free estimates, make sure that message is prominent in the ad with impossible-to-miss text reading, “For a free estimate, call now,” with easy access to your number. And to make sure customers stay on the line, let them know their call will be answered by a human rather than a robot reciting an endless list of options.

If your customer found your landing page via search, there’s a better-than-even chance they’re on a mobile device. While mobile accounted for just 27% of organic search engine visits in Q3 of 2013, its share increased to 57% as of Q4 2018. That’s great news for businesses looking to boost calls, since mobile users already have their phone in hand. However, forcing users to dig up a pen to write down your business number, only to type it back into their phone, adds an unnecessary extra step that could make some users think twice about calling. Instead, make sure mobile landing pages offer a click to call button that lists your number in big, bold text. Usually, the best place for a click to call button is in the header of the page, near your form, but it’s best practice to A/B test button location and page layouts a few different ways to make sure your click to call button can’t be overlooked.

Since 2014, local search queries from mobile have skyrocketed in volume compared to desktop. In 2014, there were 66.5 billion search queries from mobile and 65.6 billion search queries from desktop. Now in 2019, desktop has decreased slightly to 62.3 billion, while mobile has shot up to 141.9 billion, more than double the 2014 figure (an increase of roughly 113%) in five years. Mobile search is by nature local, and vice versa. If your customer is searching for businesses hoping to make a call and speak to a representative, chances are they need some sort of local service. For example, if your car breaks down, you’ll probably search for local auto shops, click a few ads, and make a couple of calls. It would be incredibly frustrating if each of those calls ended up being to a business in another state. Targeting your audience by region can ensure that you offer customers the most relevant information possible. If your business only serves customers in Kansas, you definitely don’t want to waste perfectly good ad spend drumming up calls from California. If you’re using Google Ads, make sure you set the location you want to target. That way, you can then modify your bids to make sure your call-focused ads appear in those regions.
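When quoting growth figures like the mobile and desktop query volumes above, it helps to compute the percentage change directly rather than estimate it. The following is a minimal sketch in Python; the function name `percent_change` is our own, and the inputs are simply the query volumes cited in this section.

```python
def percent_change(old: float, new: float) -> float:
    """Return the percentage change going from `old` to `new`."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100


# Query volumes in billions, as quoted in the paragraph above.
mobile_2014, mobile_2019 = 66.5, 141.9
desktop_2014, desktop_2019 = 65.6, 62.3

mobile_growth = percent_change(mobile_2014, mobile_2019)
desktop_growth = percent_change(desktop_2014, desktop_2019)

print(f"Mobile:  {mobile_growth:+.1f}%")
print(f"Desktop: {desktop_growth:+.1f}%")
```

The same helper works for any before-and-after pair, such as calls per month before and after adding a click to call button.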

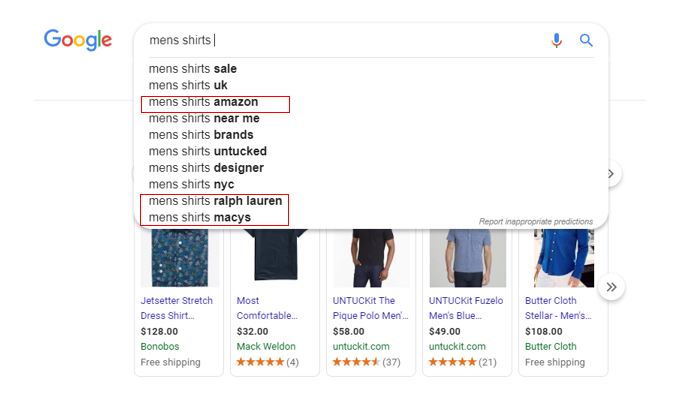

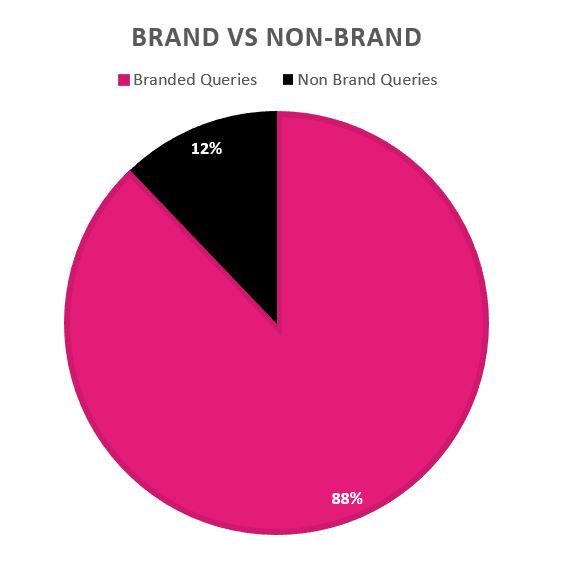

Keeping up with where your calls are coming from in the physical world is important, but tracking where they’re coming from on the web is just as critical. Understanding which of your calls are coming from ads as well as which are coming from landing pages is an important part of optimizing paid search. Using a call tracking and analytics solution alongside Google Ads can help give a more complete picture of your call data. And the more information you can track, the better. At a minimum, you should make sure your analytics solution captures data around the keyword, campaign/ad group, and the landing page that led to the call. But solutions like Invoca also allow you to capture demographic details, previous engagement history, and the call outcome to offer a total picture of not just your audience, but your ad performance.

For more information on how to use paid search to drive calls, check out Invoca’s white paper, “11 Paid Search Tactics That Drive Quality Inbound Calls.”

The post How to optimize paid search ads for phone calls appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/04/16/optimize-paid-search-phone-calls/

Search queries for your brand name, called “brand searches,” are among the most important keywords in a keyword portfolio. Even so, marketers often pay less attention to these types of queries than they should. While juicy high-volume non-branded queries are exciting, providing your audience and customers with helpful brand information is an equally thrilling prospect. The truth is that users of many brands, big and small, are commonly underserved by the branded search results they find.

In this post, we’ll show you exactly how to conduct a branded search audit, identify failing results, and implement improvements. This audit is one that we perform for our clients at Stella Rising. Now you can do the same for your clients or website.

The first part of the audit is about setting the stage. Do you know what ratio of your traffic is the result of non-brand queries vs. brand queries? You should. In this section of the audit, you’ll set the stage to discuss the importance of what you identify.

Why are branded queries so important?

Branded queries are among the most important keywords you can optimize, as they represent a brand-aware audience that is more likely to convert. In fact, many of the people searching for your brand are already customers looking for information or looking to purchase again.

It’s easy (generally)

Unlike most things in SEO, Google wants you to rank well for your own brand terms. Whenever we see branded searches that are failing users, it’s usually easy to fix. Often, it’s as simple as creating a new page or changing a meta tag. Other times it can be more challenging, such as when brands have significant PR and/or brand reputation issues. That said, in most cases, branded search queries are among the easiest to rank for. Don’t overlook them.

Correlation to rankings and personalization

While the search volume of a domain name is not a confirmed ranking factor, Google does hold a patent that may indicate the more searches a brand receives, the more likely that brand is to be seen as high quality by Google. This, in turn, may help the brand rank for associated non-brand terms. What does that mean? Essentially, if tens of thousands of people search your brand name + couch, you may be more likely to rank for “sofas.” A number of 2014 Wayfair commercials brilliantly capitalized on this opportunity. The commercials literally told people to Google “Wayfair my sofa” or “Wayfair my kitchen,” thus tying signals around their entity to other non-brand entities.

How Wayfair brilliantly linked its advertising with its branded search terms

Branded searches can also influence autocomplete, which in turn can drive more branded searches, feeding into the connection described above. When users click one of these autocompleted search suggestions, they execute a “branded search,” which then signals to Google that the entities are related. For example, “Amazon, Ralph Lauren or Macy’s” with “Men’s shirts”.

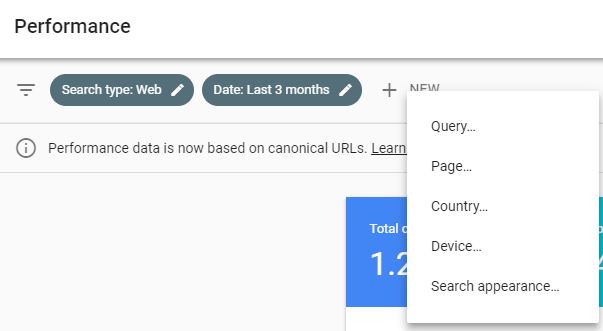

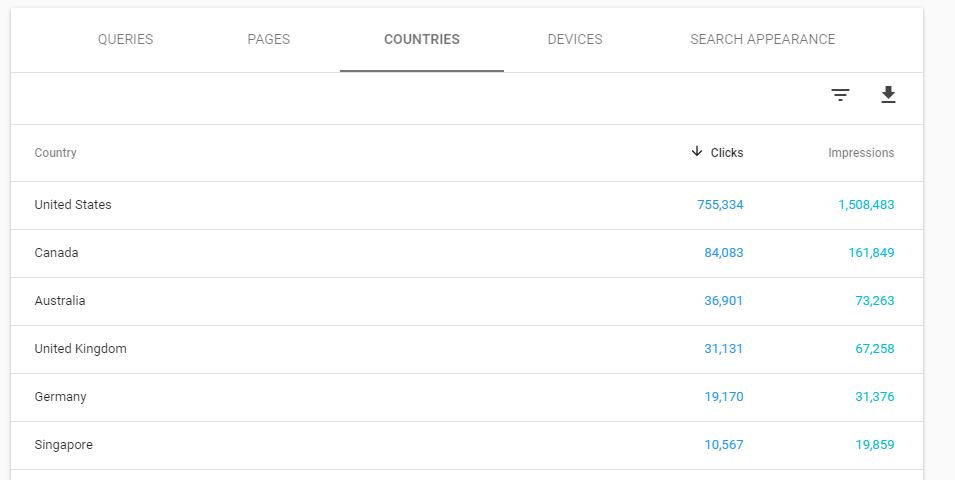

Branded search audit part one: Setting the stage

In part one of this audit, you will provide branded search landscape insights. Like any good show, you need to set the stage for the information you are about to present. This will help to win buy-in, prioritize your efforts, and keep you strategically on track.

What is your ratio of branded search?

For this part, you’ll need to head to the Google Search Console and open up your performance report for the last three months. Start by setting up a filter for your brand name. If you have a brand name that people commonly misspell, then you will want to take that into account.* Click the “+ New” button and then click “Query.” Filter by “Queries Containing” and not “Query is Exactly.”

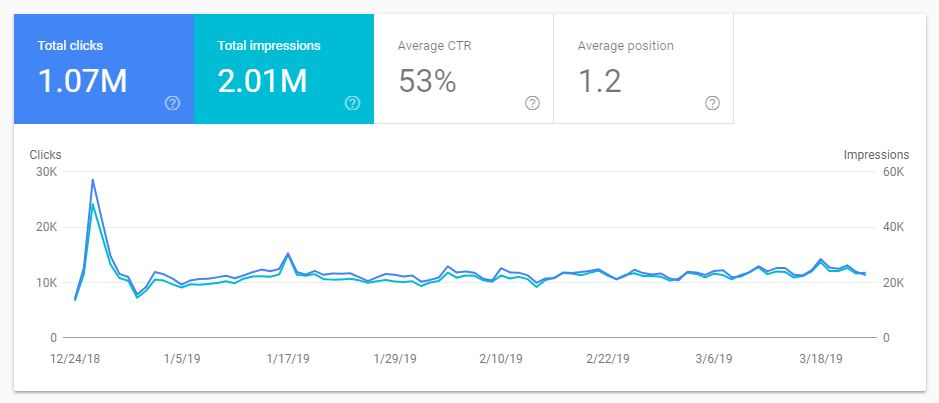

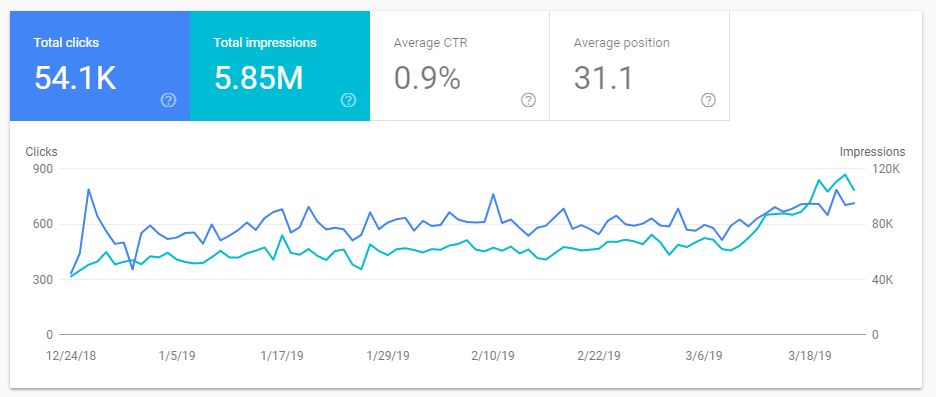

*Advanced Tip: The above instructions only account for your brand name, not product names that are proprietary to your brand. Consider using the API to pull down your GSC report and do filtering for those as well.

You’ll then see the total number of branded clicks and impressions your site receives.
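For the advanced tip above, once query data has been pulled down (via the API or a manual export), the filtering for brand names, misspellings, and proprietary product names can be done with a single pattern. The following is a minimal sketch in Python; the brand terms, sample rows, and function names are hypothetical placeholders, and the rows are assumed to be simple (query, clicks) pairs rather than any particular API response format.

```python
import re

# Hypothetical brand terms: the brand name, a common misspelling,
# and a product name that is proprietary to the brand.
BRAND_TERMS = ["acme", "akme", "roadrunner trap"]

# One case-insensitive pattern that matches any of the terms.
BRAND_PATTERN = re.compile(
    "|".join(re.escape(term) for term in BRAND_TERMS), re.IGNORECASE
)


def is_branded(query: str) -> bool:
    """Mimic a 'queries containing' filter across all brand terms."""
    return BRAND_PATTERN.search(query) is not None


def split_clicks(rows):
    """Sum clicks for branded and non-branded queries.

    `rows` is an iterable of (query, clicks) pairs.
    """
    branded = non_branded = 0
    for query, clicks in rows:
        if is_branded(query):
            branded += clicks
        else:
            non_branded += clicks
    return branded, non_branded


# Made-up sample data for illustration only.
sample_rows = [
    ("acme anvils", 120),
    ("Akme store hours", 15),
    ("roadrunner trap review", 40),
    ("best anvils", 300),
]

branded_clicks, non_branded_clicks = split_clicks(sample_rows)
print(branded_clicks, non_branded_clicks)
```

Because the pattern uses substring matching, very short brand names may match unrelated queries, so it is worth spot-checking the branded list before reporting on it.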

Now change your filter to “Queries not containing.” This will give you roughly the number of non-branded clicks the website has received from Google search.

Take this data and bring it into Excel. From there, create a pie chart to visually demonstrate the ratio of branded to non-branded clicks the website receives.
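If you would rather compute the ratio before charting it, the two click totals from the filters above are all you need. The following is a minimal sketch in Python; the click counts are made-up placeholders and `click_shares` is our own helper name.

```python
def click_shares(branded_clicks: int, non_branded_clicks: int) -> dict:
    """Return each segment's share of total clicks as a percentage."""
    total = branded_clicks + non_branded_clicks
    if total == 0:
        raise ValueError("no clicks to compare")
    return {
        "branded": round(100 * branded_clicks / total, 1),
        "non_branded": round(100 * non_branded_clicks / total, 1),
    }


# Placeholder totals; substitute the numbers from your own performance report.
shares = click_shares(branded_clicks=4200, non_branded_clicks=9800)
print(shares)
```

These two percentages are the same values the pie chart displays, which makes them handy for a caption or summary line in the report.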

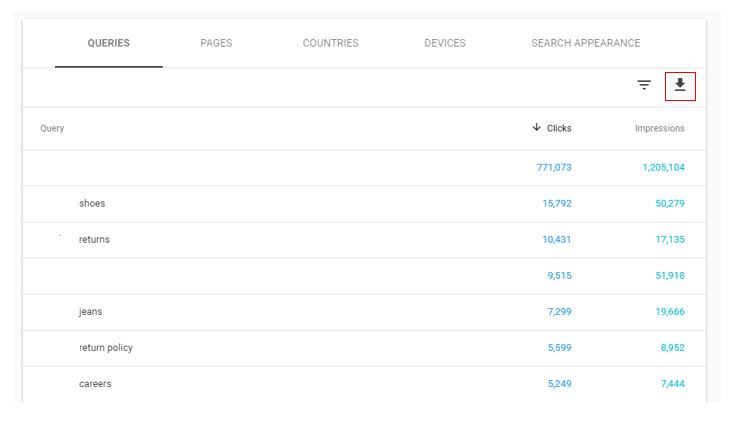

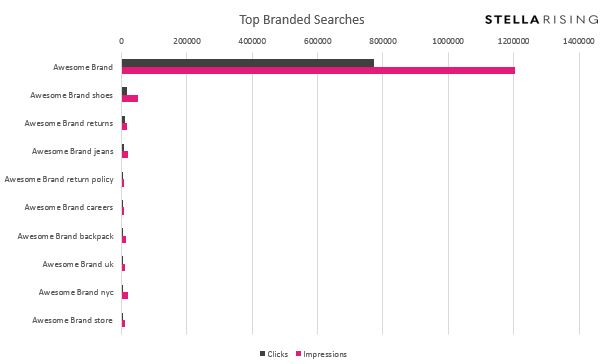

What are the top branded queries driving traffic?

The next and perhaps one of the most critical questions for this analysis is, “What are the top branded queries?” Understanding this is important because the next step in this audit is to manually search each of the top ten (or more) queries. That way, you will understand which queries could better serve users. While this analysis is simple, we found that creating a simple visual with the data makes for a better story in your presentation. To do this, download the data by clicking the down arrow at the top of the queries table.

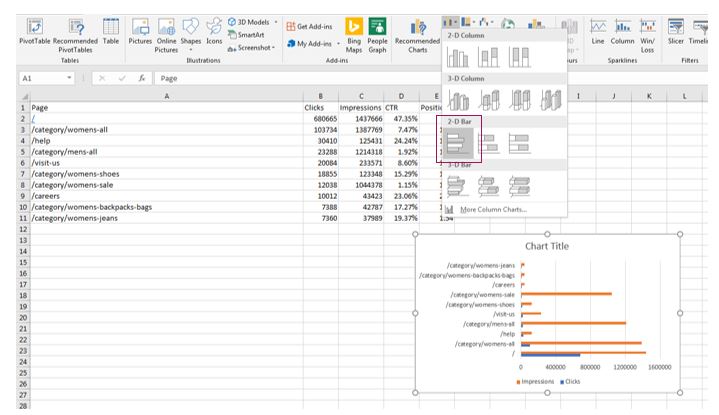

Once you have the data in Excel, you can create a clear visual using a 2D bar chart.
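The same top-queries list can also be pulled out of the downloaded file with a few lines of Python. This is a minimal sketch, assuming the export is a CSV with “Top queries” and “Clicks” columns; check the header row of your own file, since the column names here are an assumption, and the sample data is made up.

```python
import csv
import io

# Made-up sample standing in for the downloaded queries file.
SAMPLE_EXPORT = """Top queries,Clicks,Impressions
acme,950,4000
acme login,400,900
acme coupon,120,800
acme careers,310,700
acme returns,275,650
"""


def top_queries(csv_file, n=10, query_col="Top queries", clicks_col="Clicks"):
    """Return the n queries with the most clicks as (query, clicks) pairs."""
    reader = csv.DictReader(csv_file)
    rows = [(row[query_col], int(row[clicks_col])) for row in reader]
    rows.sort(key=lambda pair: pair[1], reverse=True)
    return rows[:n]


top = top_queries(io.StringIO(SAMPLE_EXPORT), n=3)

# Print a rough text bar chart: one '#' per 50 clicks.
for query, clicks in top:
    print(f"{query:<15} {'#' * (clicks // 50)} {clicks}")
```

For a real export, replace `io.StringIO(SAMPLE_EXPORT)` with an opened file, for example `open("Queries.csv", newline="", encoding="utf-8")`, where the filename is whatever your download is called.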

What are the top pages receiving branded clicks?

Similar to the above analysis, you will need to download the top pages from the “pages” tab in your performance report.

Where do branded searches come from?

For some brands, it is worth considering where branded searches come from geographically. To find this information, set your filter to include brand queries and then click the “countries” tab in the Google Search Console.

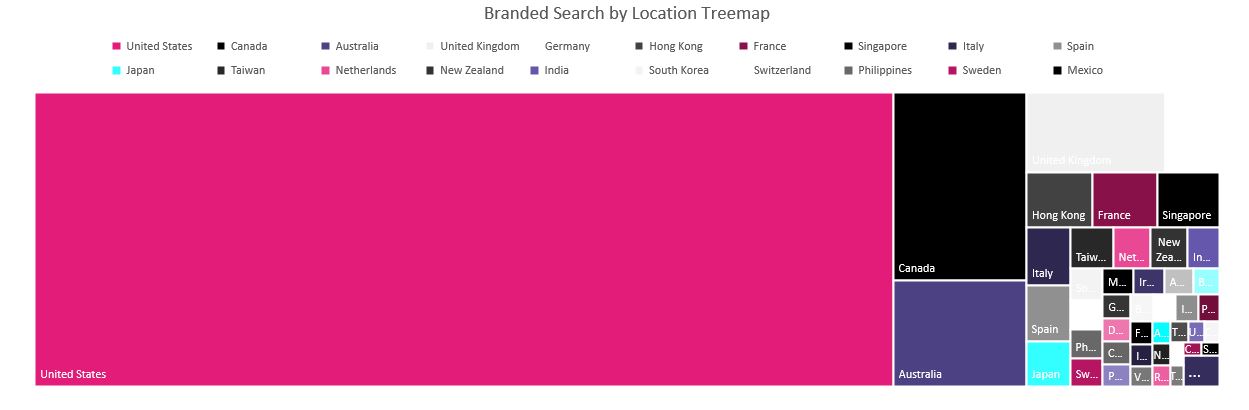

Now we could just put that image into a report, but what fun would that be? Instead, download the data and bring it into Excel to create a visual. You can do this a number of ways, but we recommend either using a map or a tree-map which generates a cool way of looking at the data. A pie chart would do but is not as visually appealing. Open Excel and highlight the data you downloaded. Click “Insert”, then click either on “Maps” or the second box for a treemap.

Tree-map visualization of country click data

How has branded search trended over time?

The last and perhaps the most essential question outside of which queries get clicks is, “How has branded search trended over time?” This trend is hugely important for any brand that has invested or is currently investing in brand-building efforts, media, PR, or even non-branded paid search campaigns. During this section of the audit, we have seen brands that previously invested millions in traditional print flatten out for years. Alternatively, we’ve also seen DTC brands’ growth hit a wall. Knowing where brand interest stands is a data point that is vital to all brands and their performance marketing strategies. Whether you are conducting a branded search audit or not, tracking branded search volume is something that should be on the KPI list for marketers across a multitude of disciplines.

Branded search audit part two: Identifying issues in branded search

Do you have any brand image issues?

Sorry, no SEO magic here. If your brand, founder, or employee made headlines (and not the good ones you send to mom), your only strategy is to do your best to rectify the situation. Take, for example, a brand we came across which was at one point dealing with first page Google results full of nasty headlines. The headlines covered how the center had allegedly abandoned more than 40 research animals on an island. When I first heard this story and considered the best plan forward I thought, “Can they just decide not to abandon the animals and find them homes?” In fact, that’s exactly what they did. As a result, the negative stories were replaced over time with positive ones about how the center reached an agreement to find a sanctuary for the animals. The first page of results for their brand name is now squeaky clean.

The moral of this story is, never abandon animals on an island. But, if you do, and get dragged through the mud for it, no amount of SEO will save you. Are there any abandoned research animals in your organization? If so, get them off the island.

In other, less metaphorical terms, part of auditing brand search is brand reputation. As we all now know, “E-A-T” and reputation are hugely important to Google. Deal with business practice issues head-on and find the best resolution possible. John Mueller has reminded us that Google has a really good memory and is not apt to forget anything about your brand history. Stains on your brand reputation can really take a toll and have lasting power. The best offense here is a good defense.

Searching the top ten queries

Now for the part of the audit where we find the broken stuff. Take your list of top terms and manually execute the search for each term in a private browsing window. Record your results and a screenshot of the SERP. What are we looking for?

Searching other navigational queries

Other areas to check out are navigational or known brand queries that help users navigate and interact with your brand. For example: