
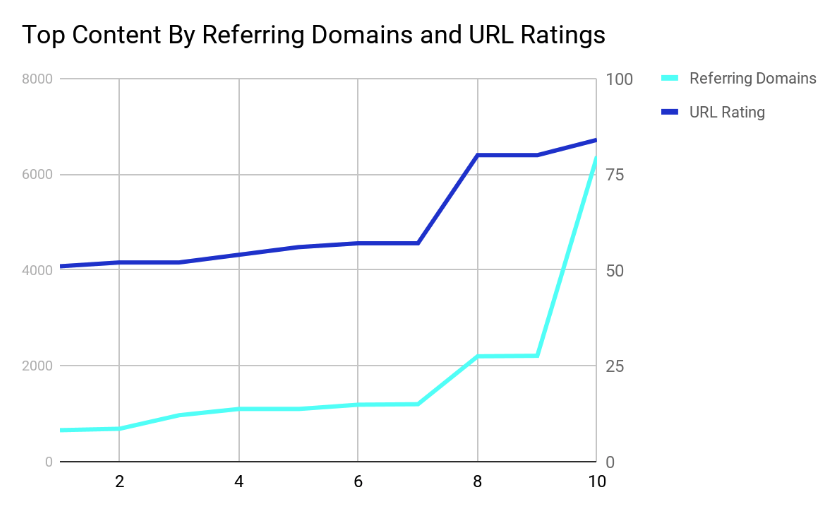

A new study by Kaizen has revealed that content that performs well for backlinks does not necessarily perform well for social shares and vice versa. Analyzing over 2300 pieces of finance content, Kaizen has found the best performing pieces of content for URL rating, the number of referring domains, and the number of social shares. Nine out of the top 10 pieces of content with the highest URL ratings also featured in the top 10 pieces of content for the most referring domains.

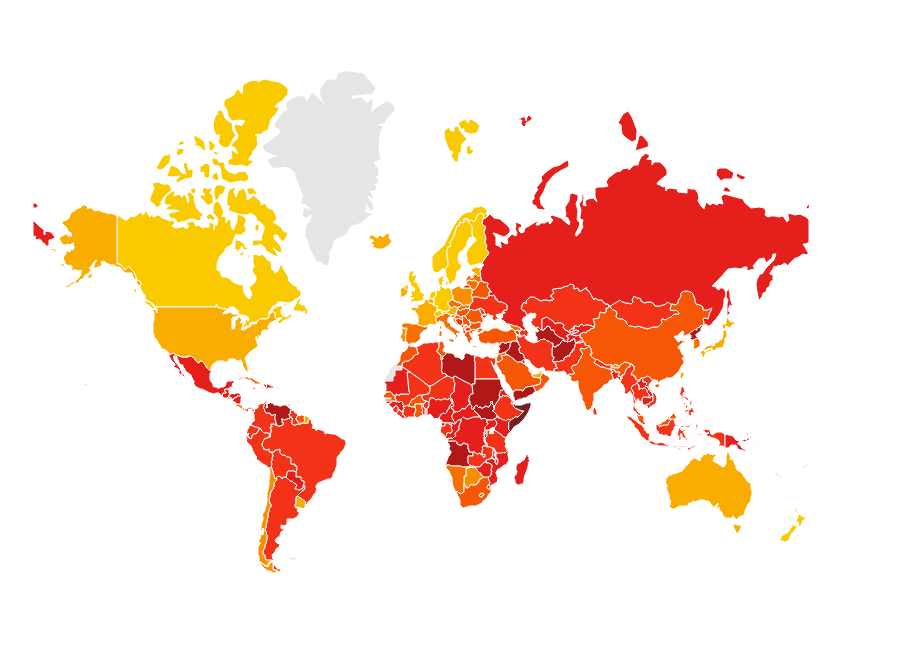

This shows a clear correlation between the two: the higher the number of referring domains, the higher the URL rating. The best-performing piece of content for both URL rating and the number of referring domains was the Corruption Perceptions Index 2017, by Transparency International. The campaign highlighted the countries that are or are not making progress in ending corruption, finding that the majority of countries were making little or no progress.

But what made this campaign succeed so well in SEO terms?

1. It has global appeal

By placing emphasis on visual components of content, the campaign is easily understandable without language and is based on data from across the world, making it globally link-worthy.

2. It is emotional content

The piece evokes an emotional response through its subject, corruption, and through the fact that the majority of countries in the world are making little or no progress in ending it.

3. It is evergreen content

“Evergreen content” is content that is not tied to a specific date or time of the year and can be outreached (and can gain links) at any time. In addition, Transparency International is able to update the data each year, creating a new story for outreach and increasing its chances of landing links.

By combining these typical elements of viral content, the Corruption Perceptions Index earned 6372 referring domains and a URL rating of 84, making it the most successful piece of finance content in the study. Use these three aspects as a checklist for your own content, and it should emulate great results.

Social shares

The Corruption Perceptions Index also ranked in the top 10 pieces of content for social shares, with a grand total of nearly 48,000. However, it is one of only two pieces to rank in the top 10 for URL ratings or referring domains and also for social shares. There is much less correlation between social share success and backlink success, showing that they are not directly or significantly linked.

The most successful piece of content for social shares was a car insurance calculator by Confused.com, with 91,000 total social shares. This piece of content, like the majority of the top 10, is B2C-focused. In comparison, the URL rating and referring domains lists are more technical and B2B-focused. Therefore, B2B content performs better for SEO strategies focused on backlinks, whereas B2C tools and guides suitable for customers rather than businesses perform better for social shares.
The Corruption Perceptions Index is an exception, performing well for both backlinks and social shares. By focusing on analytical data from experts and business people, and by providing relevant data for both businesses and customers, it has equal value for both B2B and B2C audiences.

In conclusion

Don’t expect the same piece of content to perform well for both backlinks and social shares. But if you are able to create content that provides equal value for both B2B and B2C communities, you will have the opportunity for multiple outreach strategies, with resounding value throughout the industry.

Nathan Abbott is Content Manager at Kaizen.

The post Backlinks vs social shares: How to make your content rank for different SEO metrics appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/03/18/backlinks-vs-social-shares-how-to-make-your-content-rank-for-different-seo-metrics/


It has been a while since Google has had a major algorithm update. They recently announced one which began on the 12th of March.

What changed? It appears multiple things did. When Google rolled out the original version of Penguin on April 24, 2012 (primarily focused on link spam) they also rolled out an update to an on-page spam classifier for misdirection.

And, over time, it was quite common for Panda & Penguin updates to be sandwiched together. If you were Google & had the ability to look under the hood to see why things changed, you would probably want to obfuscate any major update by changing multiple things at once to make reverse engineering the change much harder. Anyone who operates a single website (& lacks the ability to look under the hood) will have almost no clue about what changed or how to adjust with the algorithms. In the most recent algorithm update some sites which were penalized in prior "quality" updates have recovered.

Though many of those recoveries are only partial.

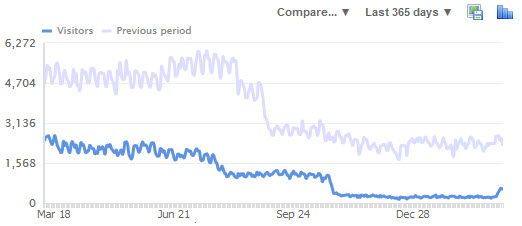

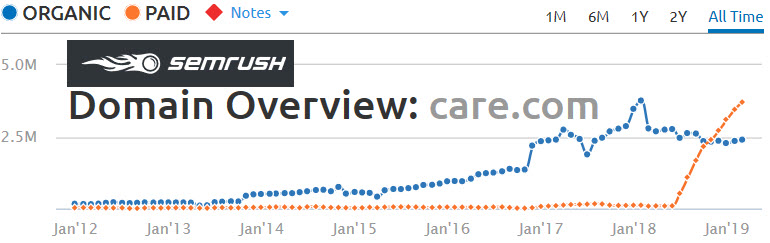

Many SEO blogs will publish articles about how they cracked the code on the latest update by publishing charts like the first one without publishing that second chart showing the broader context. The first penalty any website receives might be the first of a series of penalties. If Google smokes your site & it does not cause a PR incident & nobody really cares that you are gone, then there is a very good chance things will go from bad to worse to worser to worsterest, technically speaking.

Absent effort & investment to evolve FASTER than the broader web, sites which are hit with one penalty will often further accumulate other penalties. It is like compound interest working in reverse - a pile of algorithmic debt which must be dug out of before the bleeding stops.

Further, many recoveries may be nothing more than a fleeting invitation to false hope: an invitation to pour more resources into a site that is struggling in an apparent death loop.

The above site which had its first positive algorithmic response in a couple years achieved that in part by heavily de-monetizing. After the algorithm updates already demonetized the website over 90%, what harm was there in removing 90% of what remained to see how it would react? So now it will get more traffic (at least for a while) but then what exactly is the traffic worth to a site that has no revenue engine tied to it?

That is ultimately the hard part: obtaining a stable stream of traffic while monetizing at a decent yield, without the monetizing efforts leading to the traffic disappearing.

A buddy who owns the above site was working on link cleanup & content improvement on & off for about a half year with no results. Each month was a little worse than the prior month. It was only after I told him to remove the aggressive ads a few months back that he likely had any chance of seeing any sort of traffic recovery. Now he at least has a pulse of traffic & can look into lighter touch means of monetization.

If a site is consistently penalized then the problem might not be an algorithmic false positive, but rather the business model of the site. The more something looks like eHow the more fickle Google's algorithms will be toward it. Google does not like websites that sit at the end of the value chain & extract profits without having to bear far greater risk & expense earlier into the cycle.

Thin rewrites, largely speaking, don't add value to the ecosystem. Doorway pages don't either.
And something that was propped up by a bunch of keyword-rich low-quality links is (in most cases) probably genuinely lacking in some other aspect.

Generally speaking, Google would like themselves to be the entity at the end of the value chain extracting excess profits from markets. This is the purpose of the knowledge graph & featured snippets: to allow the results to answer the most basic queries without third party publishers getting anything. The knowledge graph serves as a floating vertical that eats an increasing share of the value chain & forces publishers to move higher up the funnel & publish more differentiated content.

As Google adds features to the search results (flight price trends, a hotel booking service on the day AirBNB announced they acquired HotelTonight, ecommerce product purchase on Google, shoppable image ads just ahead of the Pinterest IPO, etc.) it forces other players in the value chain to consolidate (Expedia owns Orbitz, Travelocity, Hotwire & a bunch of other sites) or add greater value to remain a differentiated & sought after destination (travel review site TripAdvisor was crushed by the shift to mobile & the inability to monetize mobile traffic, so they eventually had to shift away from being exclusively a reviews site to offer event & hotel booking features to remain relevant).

It is never easy changing a successful & profitable business model, but it is even harder to intentionally reduce revenues further or spend aggressively to improve quality AFTER income has fallen 50% or more. Some people do the opposite & make up for a revenue shortfall by publishing more lower end content at an ever faster rate and/or increasing ad load. Either of these typically makes their user engagement metrics worse while making their site less differentiated & more likely to receive additional bonus penalties to drive traffic even lower.

In some ways I think the ability for a site to survive & remain through a penalty is itself a quality signal for Google.
Some sites which are overly reliant on search & have no external sources of traffic are ultimately sites which tried to behave too similarly to the monopoly that ultimately displaced them. And over time the tech monopolies are growing more powerful as the ecosystem around them burns down:

Businesses which have sustainable profit margins & slack (in terms of management time & resources to deploy) can better cope with algorithmic changes & change with the market. Over the past half decade or so there have been multiple changes that drastically shifted the online publishing landscape:

Each one of the above could take a double digit percent out of a site's revenues, particularly if a site was reliant on display ads. Add them together and a website which was not even algorithmically penalized could still see a 60%+ decline in revenues. Mix in a penalty and that decline can chop a zero or two off the total revenues. Businesses with lower margins can try to offset declines with increased ad spending, but that only works if you are not in a market with 2 & 20 VC fueled competition:

And sometimes the platform claws back a second or third bite of the apple. Amazon.com charges merchants for fulfillment, warehousing, transaction based fees, etc. And they've pushed hard into launching hundreds of private label brands which pollute the interface & force brands to buy ads even on their own branded keyword terms. They've recently jumped the shark by adding a bonus feature where even when a brand paid Amazon to send traffic to their listing, Amazon would insert a spam popover offering a cheaper private label branded product:

Buying those Amazon ads was quite literally subsidizing a direct competitor pushing you into irrelevance. As the market caps of big tech companies climb they need to be more predatory to grow into the valuations & retain employees with stock options at an ever-increasing price. They've created bubbles in their own backyards where each raise requires another. Teachers either drive hours to work or live in houses subsidized by loans from the tech monopolies that get a piece of the upside (provided they can keep their own bubbles inflated).

The above sort of dynamics have some claiming peak California:

If you live hundreds of miles away the tech companies may have no impact on your rental or purchase price, but you can't really control the algorithms or the ecosystem. All you can really control is your mindset & ensuring you have optionality baked into your business model.

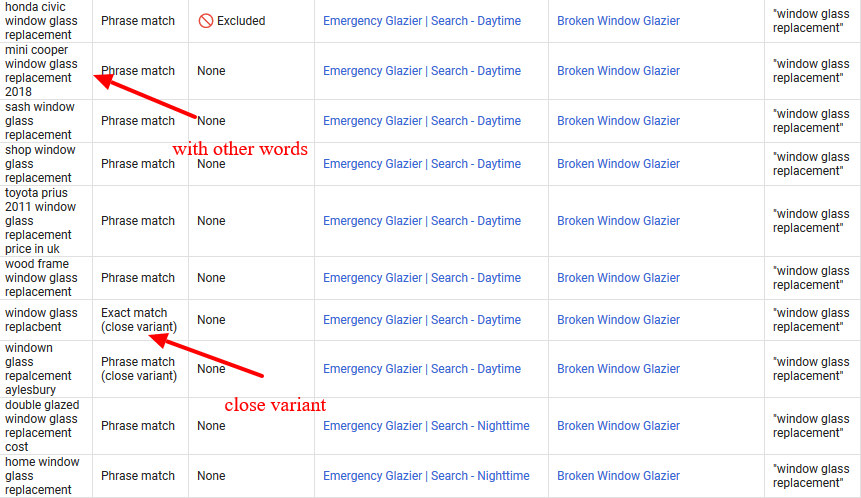

As the update ensues Google will collect more data on how users interact with the result set & determine how to weight different signals, along with re-scoring sites that recovered based on the new engagement data. Recently a Bing engineer named Frédéric Dubut described how they score relevancy signals used in updates.

That same process is ongoing with Google now & in the coming weeks there'll be the next phase of the current update. So far it looks like some quality-based re-scoring was done & some sites which were overly reliant on anchor text got clipped. On the back end of the update there'll be another quality-based re-scoring, but the sites that were hit for excessive manipulation of anchor text via link building efforts will likely remain penalized for a good chunk of time.


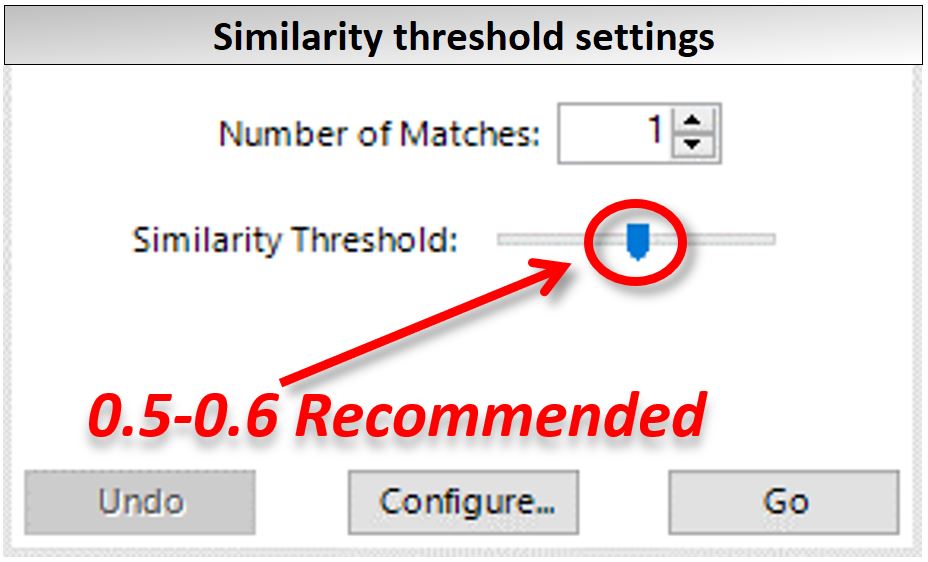

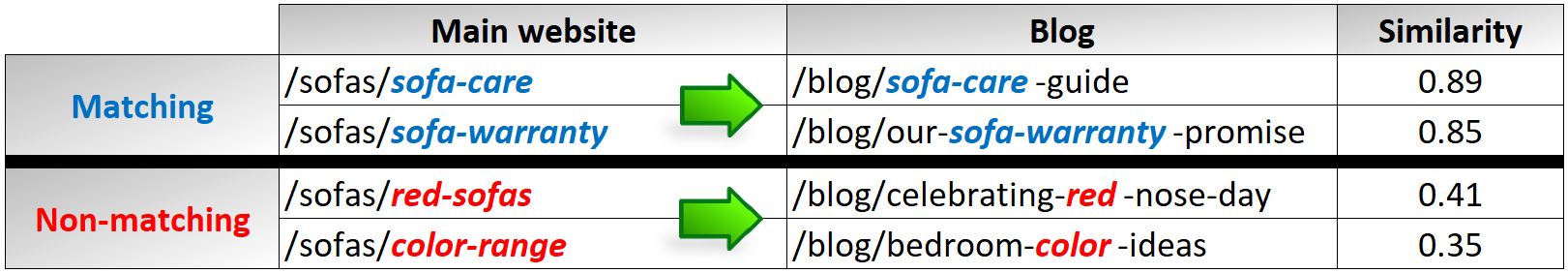

from http://www.seobook.com/google-florida-20-algorithm-update-early-observations

In recent years, the nature of SEO has become more and more data-driven, paving the way for innovative trends such as AI or natural language processing. This has also created opportunities for smart marketers, keen to use everyday tools such as Google Sheets or Excel to automate time-consuming tasks such as redirect mapping. Thanks to the contribution of Liam White, an SEO colleague of mine always keen on improving efficiency through automation, I started testing and experimenting with the clever Fuzzy Lookup add-in for Excel. The tool, which allows fuzzy matching of pretty much any set of data, represents a flexible solution for cutting down manual redirects for 404 not-found pages and website migrations. In this post, we’ll go over the setup instructions and hands-on applications to make the most of the Excel Fuzzy Lookup for SEO.

1. Setting up Excel Fuzzy Lookup

Getting started with Fuzzy Lookup couldn’t be easier: just visit the Fuzzy Lookup download page and install the add-in onto your machine. System requirements are quite basic. However, the tool is specifically designed for Windows users, so there is no Mac support for the moment.

Unlike the approximate match in VLOOKUP (which matches a set of data with the first result), Fuzzy Lookup operates in a more comprehensive way, scanning all the data first, and then providing a fuzzy match based on a similarity score. The score itself is easy to grasp: a score of one is a perfect match, and the score decreases with the matching accuracy down to zero, where there is no match. It’s advisable not to venture below the 0.5 to 0.6 similarity threshold in the settings, as results below that limit are not consistent enough for site migration or 404 redirect purposes.

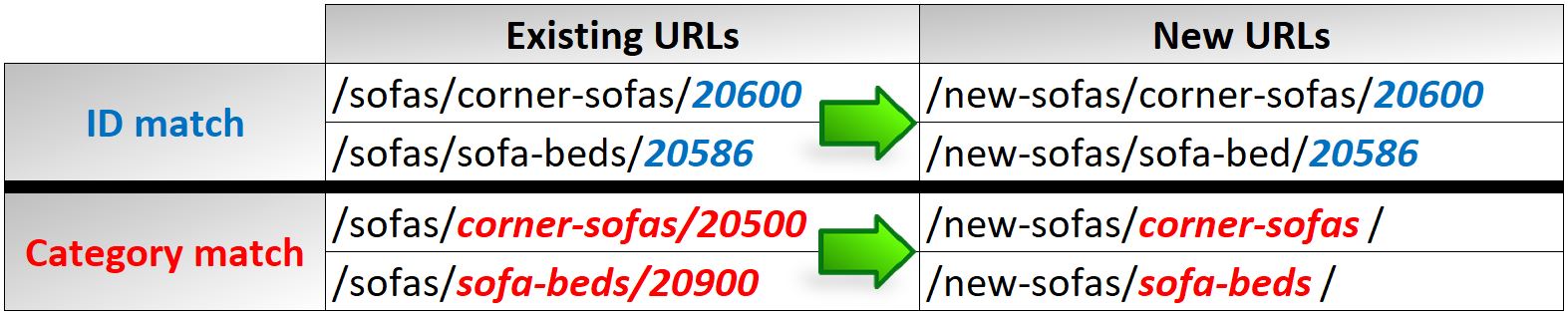

For greater accuracy, it’s also desirable to trim the domain (or staging site equivalent) from the URLs, making sure that the similarity score is not skewed by too many commonalities. For more information about the setup, you can also refer to the Fuzzy Lookup guide.

2. Redirect mapping automation and its benefits

Considering the time necessary to familiarize yourself with the site, categories and products/services, it’s safe to assume that a person would manually match two URLs roughly every thirty seconds. If that doesn’t sound too bad, consider that it would take between five and eight hours for a website of 1,000 URLs, which makes it quite a tedious and time-consuming task. Bearing in mind that Fuzzy Lookup can provide nearly immediate results with reliable fuzzy matching for at least 30 to 40 percent of the URLs, this approach starts to appear interesting. In terms of time saved, this would translate to about three hours for a small site or over ten hours for a large ecommerce site.

3. Dealing with site migration redirects

If you are changing the structure of a site, consolidating several domains into one, or simply switching to a new platform, then redirect mapping for a website migration is definitely a priority task on your list. Assuming that you already have a list of existing pages plus the new site URLs, you are all set to go with Fuzzy Lookup for site migrations. Once you have set up the two URL lists in two separate tables, you can fire up Fuzzy Lookup and order the matched URLs by similarity score. In my tests, this has proven to be an effective, time-saving solution, cutting down the manual work by several hours. As displayed in the screenshot below, the fuzzy matching excelled with product codes and services/goods (such as 20600 and corner-sofas, for example). This allows the matching of IDs with IDs, and of the URL with the parent category where an identical ID is not available.

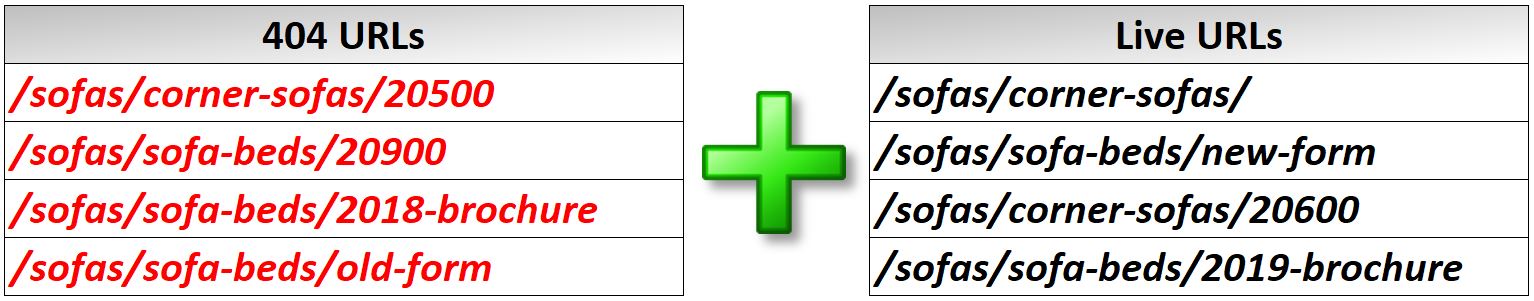
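For readers without Windows or Excel, the same idea can be roughly sketched in Python. This is not the add-in's algorithm: it swaps in `difflib.SequenceMatcher` from the standard library as the similarity measure, so scores will differ from Fuzzy Lookup's. The function names, the 0.6 default threshold, and the example URLs are assumptions made for illustration.

```python
from difflib import SequenceMatcher
from urllib.parse import urlparse


def trim_domain(url: str) -> str:
    """Keep only the path, so a shared domain does not skew the score."""
    return urlparse(url).path.strip("/").lower()


def similarity(a: str, b: str) -> float:
    """Score from 0.0 (nothing in common) to 1.0 (identical strings)."""
    return SequenceMatcher(None, a, b).ratio()


def map_redirects(old_urls, new_urls, threshold=0.6):
    """Pair each old URL with its most similar new URL.

    Matches scoring below the threshold map to None and need manual review.
    """
    new_paths = [(url, trim_domain(url)) for url in new_urls]
    mapping = {}
    for old in old_urls:
        old_path = trim_domain(old)
        best_url, best_score = None, 0.0
        for new, new_path in new_paths:
            score = similarity(old_path, new_path)
            if score > best_score:
                best_url, best_score = new, score
        if best_score < threshold:
            best_url = None
        mapping[old] = (best_url, round(best_score, 2))
    return mapping


# Hypothetical URLs, for illustration only.
old_urls = [
    "https://old.example.com/products/corner-sofas-20600.html",
    "https://old.example.com/about-us.html",
]
new_urls = [
    "https://www.example.com/sofas/corner-sofas/20600",
    "https://www.example.com/company/about",
    "https://www.example.com/contact",
]

for old, (new, score) in map_redirects(old_urls, new_urls).items():
    print(old, "->", new, score)
```

As with the add-in, anything that falls below the threshold is better left for a manual pass than redirected automatically.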

4. 404 error redirects

Pages with a 404 status code are part of the web and no website is immune: most host at least a few of them. Having said that, 404 errors have the potential of creating problems, hurting the user experience and SEO. Fuzzy Lookup can help with that, requiring just one simple addition: a recent crawl of your site to extract the list of live pages.

The fuzzy matching works pretty well in this instance too, matching IDs with IDs, and falling back to the most relevant category if a similar product/service is not live on the site. As with site migrations, the manual work is not completely wiped out, but it’s made a whole lot easier than before.

5. Bonus: Finding gaps/similarities in the blog

Another interesting application for Excel Fuzzy Lookup can be found in analyzing the blog section. Why? Simply because if you’re not in charge of the blog then you are not likely to be aware of what’s in it now, and what has been written in the past. This solution works in two ways: if a similarity is found, then you have the confirmation that the topic has already been covered. If not, this means that there’s still room for creating relevant content that can be linked to the service/product category to improve organic reach as well.

Wrapping up

Time is money, and when it comes to dealing with large numbers of URLs that need to be redirected, a solution like Fuzzy Lookup can help you cut down the tedious manual redirect mapping. So why not embrace fuzzy automation and save time for more exciting SEO tasks?

Marco Bonomo is an SEO & CRO Expert at MediaCom London. He can be found on Twitter @MarcoBonomoSEO.

The post Excel Fuzzy Lookup for SEO: Effortless 404 and site migration redirects appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/03/15/excel-fuzzy-lookup-for-seo-effortless-404-site-migration-redirects/

There is certainly a big pool of choices for agencies when it comes to picking a website audit tool. There are standalone tools and those that come as part of a package. Some audit tools go through all pages of a website while others just give an overview of a specific page. And there are some tools that claim to be developed specifically for agencies but in reality can’t cope with the requirements that are very important to digital agencies and digital commerce services. In this article, we are going to dive deep into what exactly agencies need when it comes to website audits and what to look for when choosing the tool for this task.

The audit has to scan deeply and provide a detailed report

Let’s start with reviewing the capabilities of website audit tools in terms of how deeply and completely they audit websites. Google ranks pages, not entire sites. So, logically, a website audit has to analyze each page separately. But that is only at a glance, so to speak, because Google considers everything:

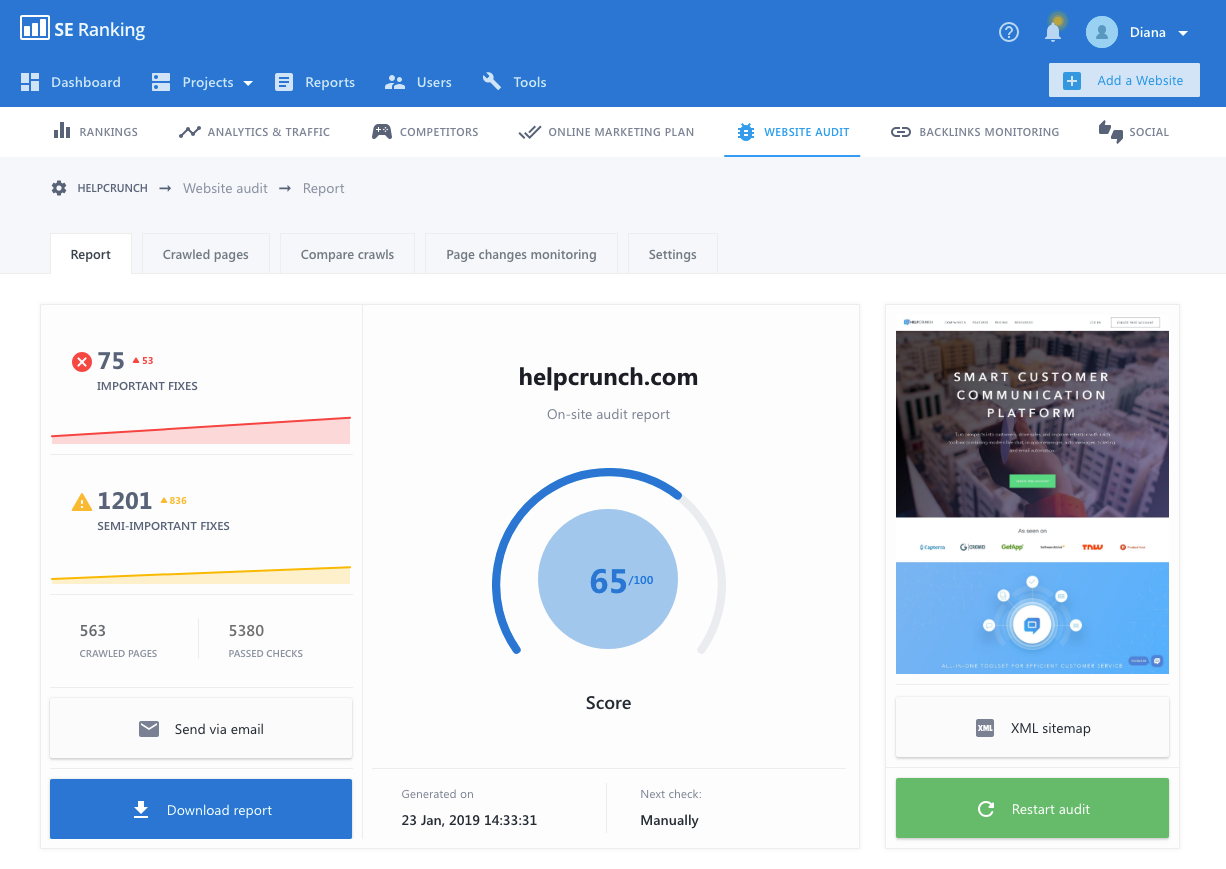

Advice #1: Choose a website audit tool with customizable settings

Select a website audit tool that is powerful enough to scan as deeply as possible (scan all pages, subdomains, and even test pages), and with a high level of detail so that you can see which pages require your immediate attention and which ones can wait a little. My favorite website audit tool in terms of control and completeness is that of SE Ranking. These guys really went the extra mile in creating a tool that lets users set the scanning depth and speed. You can decide what pages to audit (you can upload URLs in an Excel file, configure whether the scanning process should follow or ignore robots.txt rules, or set up your own rules). Plus you can choose the maximum number of pages to audit and define what should be treated as an error at the main negotiable optimization points.

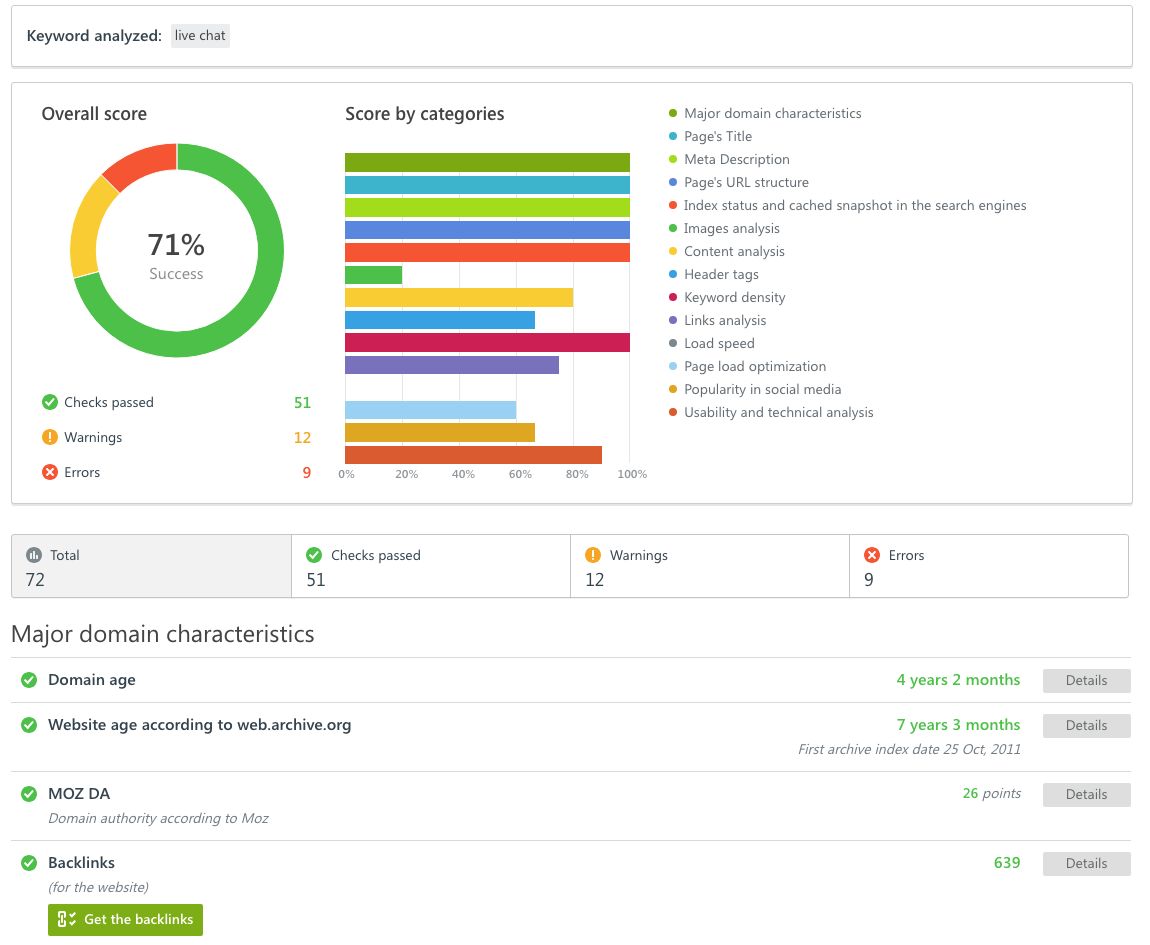

I also like the fact that all their reports are white-labeled and highly customizable. On top of that, I love that the functionality of their website audit includes the option to create an XML sitemap right there in the tool in just a few clicks.

Lead generation and website audit

SEO as part of marketing sure is a way to drive and convert traffic. From that perspective, a website audit helps to discover errors and fix problems that prevent website pages from being ranked higher in SERPs. In other words, you are using a website audit to make your website make more money. But for those who make a living out of digital marketing, a website audit is also a tool that generates income by itself, so to speak.

Advice #2: Choose an audit tool that comes with embed options (aka widgets) that will generate leads for you in exchange for a website or an on-page audit

For example, SE Ranking offers a tool called lead generator, which is a web form installed on your site that provides a free on-page audit to anyone who fills out the form (I also like the one from MySite Auditor). The audit report comes in a nice, easy-to-read format that anyone can understand. Such widgets bring value to your visitors and build trust in your services. And for you, they bring qualified leads and a list of the problems their pages have, which is a great starting point for a nurturing conversation. When choosing which widget to pick, check the following:

Ideally, the lead generating option has to be easily customizable and able to integrate with your CRM; that way you can incorporate it nicely into your site and your business operations.

SEO software that offers a lead generation form: WooRank, SE Ranking, MySite Auditor.

White-labeled SEO platform with a website audit

Presenting your SEO services based on data collected by your own technology is an incredibly powerful way to create brand ambassadors for your agency. You can use software that offers only a white-labeled website audit and customizable reporting, but I found those to come with a lot of limitations. For an agency, a full white label option is the best choice regardless of whether you want to do a website audit or present an array of your digital services in a way that reflects your identity in the most complete manner.

Advice #3: Make sure the software you pick for website audit offers a white label option

Building credibility and trust with your clients is the number one priority for agencies. As found in multiple sources, around 70% of the business that comes to agencies is generated through referral, which is built on trust and loyalty. A few suggestions on how to choose software with the white label option:

Another valuable tip: Use your own domain/subdomain for white label SEO. That way the services you are providing will look authentic and genuine, with no traces back to the parent software.

SEO software where white label comes with the subscription: SE Ranking, WebCEO, BrightLocal, NinjaCat. More software is listed here.

Comparison data in a website audit

I don’t know about you, but the majority of my clients expect results the day after signing the retainer for my services. Their web pages should be at the top of the SERPs right away which, of course, is not possible. So what else can agencies do to justify their bread and butter for a good number of months before their efforts start yielding tangible results? I show dynamics.

Advice #4: Make sure that the website audit tool you pick comes with comparative data and dynamics analysis

A website audit is a great tool for showing progress, especially if the tool you choose provides comparison data and analytics over time. I like to show clients the initial report with all sorts of errors in there and then provide snapshots from later periods: one month after the first report, two months, and so forth. Look how many errors we fixed, I tell my clients, how many things are optimized, and how many improvements we’ve implemented.

SEO software that offers a comparison audit: MySiteAuditor, SEMRush, ScreamingFrog, SEO Report Card.

Reporting module in a website audit

I can’t stress enough how important it is for agencies to be able to slice and dice the data they are working with for a client in a visually appealing and informative format. I would say that reporting is a tool for keeping clients happy while keeping a close eye on the ROI of your SEO progress and investments.

Advice #5: The website audit tool of your choice has to come with a robust and highly customizable reporting module

Of course, any website audit comes with a report. How else would you see all the errors and data obtained as a result of the scanning? However, not all come with options that are absolutely necessary for agencies:

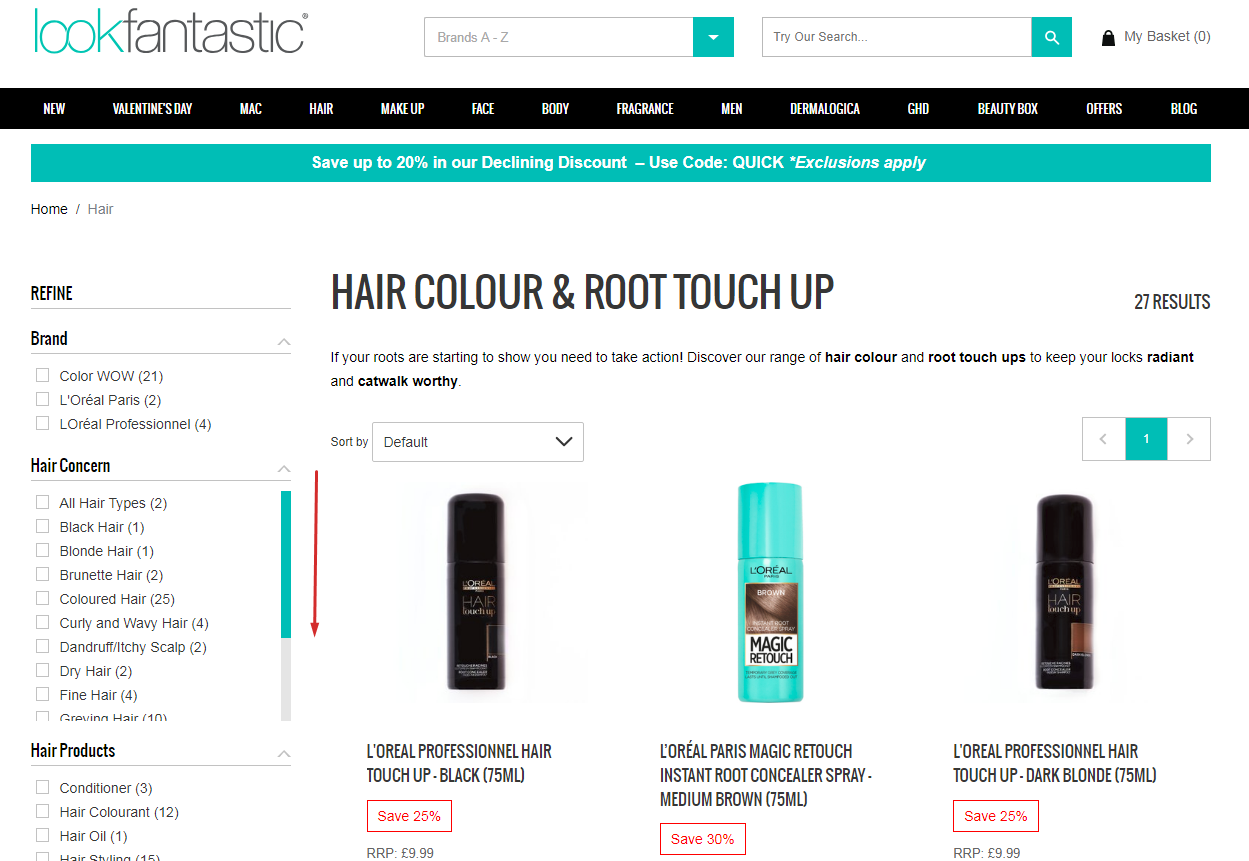

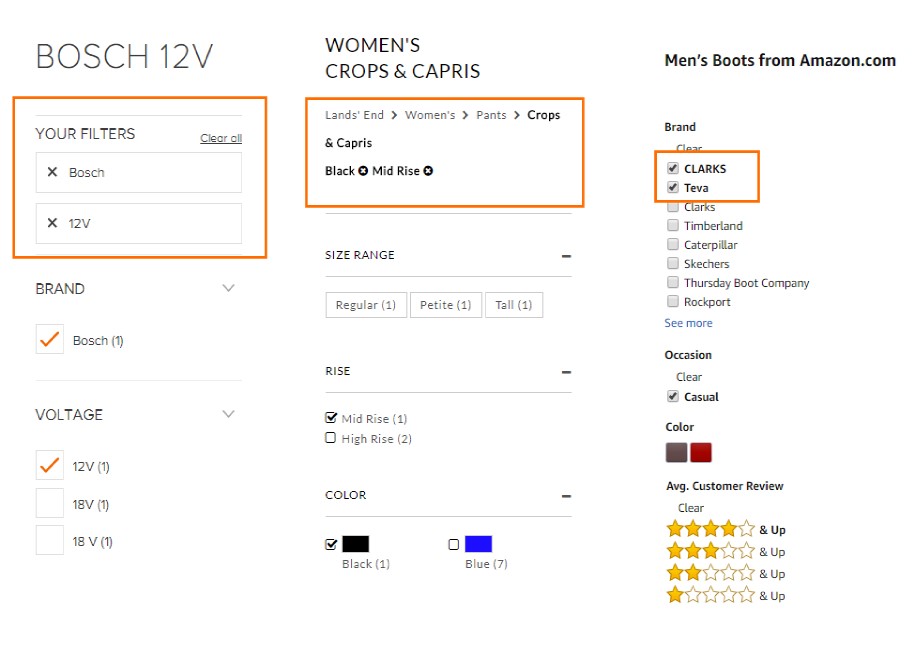

SEO software that offers robust reporting within the website audit: SE Ranking, MySiteAuditor, SEMRush, Ahrefs.

A few words in conclusion

I know some people say that agencies or marketing teams like to use website auditing software that does just that: run a website audit. But in my years of experience, I have found this to be highly inefficient, since there are so many tasks to do for a client and so many projects to handle. It leads to juggling between interfaces, reports, and functionality, which gets really annoying if not completely chaotic. What I do is use an SEO platform that comes with everything and has a very powerful website audit module that complies with all the requirements I outlined above. It saves me time and money. And I do use one that comes with a white label, so everything I present to my clients is deeply branded and rooted in my business values.

Marco Bonomo is an SEO & CRO Expert at MediaCom London. He can be found on Twitter @MarcoBonomoSEO.

The post How to pick the best website audit tool for your digital agency appeared first on Search Engine Watch.

from https://searchenginewatch.com/2019/03/15/how-pick-best-website-audit-tool/

Who can argue that building an SEO strategy is not a time-consuming thing? Keyword research, niche analysis, technical audit, link building: all these tasks are just a small part of an SEO’s daily routine. To automate search engine optimization processes, experts use special tools and software. But that’s not always sufficient when analyzing the results. Of course, solving some basic issues for a small website isn’t that difficult with quality SEO tools. On the other hand, if you work with several sites and analyze lots of data, you’ll need to find ways of saving your time. At this point, people may look into implementing other methods in their working process. This is usually where various SEO extensions and plugins come in.
They are very convenient as you can activate them in one click right from the page you’re analyzing. However, extensions often have even fewer features than the SEO tool itself. Taking a closer look at the issue, there’s one more solution to be found. I’m talking about APIs, a method few people know how to use, missing the opportunity to benefit a lot. In this article, I’ll tell you what an API is, why you need it, and how to use it to fulfill SEO tasks.

What is an API?

API stands for application programming interface. It’s a set of functions that lets users get access to the data or components of a tool. In other words, an API is a set of methods of communication among several applications. APIs may serve various purposes. For instance, developers often use them to embed objects into websites. If you see a piece of Google Maps on a site, it means that the Google Maps API is being used there. The same may be done with apps or tools.

Why does an SEO expert need this?

The right API helps experts simplify the whole process of data collection. Some SEO tools offer their customers the opportunity to use their APIs and drive better results. This lets users integrate the analytics provided by platforms into their custom interface tools. With an API, you can request data and get it without even touching the tool’s interface. Advantages of an API:

Four tasks you can better solve with an API

As previously mentioned, APIs let you make your SEO research much more flexible than typical tools do. So, what tasks exactly do APIs help with, and how can you use them for maximum profit? While SEOs have various issues to deal with, there are different platforms created to facilitate keyword research, niche analysis, content curation, and evaluation of the results. Some of these tools provide APIs to make the research even more effective. Below you’ll find the tasks an API may help you cope with and the tools providing such a method for their customers.

1. Keyword research and batch analysis of websites

A comprehensive niche analysis and proper keyword research are the first tasks appearing in an SEO’s to-do list when they get to a new project. SEO tools meet these needs very well, unless you don’t want to spend your time analyzing each competitor or keyword individually. For this purpose, quality tools provide their APIs. With the Serpstat API, conducting complex research becomes much easier. The thing is that when working with it you don’t even have to know how an API actually works. Serpstat has created several documents with scripts already implemented in them. It means that all you need to do is enter your token and create your request. This document allows you to take advantage of all the Serpstat API methods in one place. The API includes domain analysis, URL analysis, and keyword research features. It provides 17 reports on competitors, domain history, top pages, related keywords, missing phrases, and more. For example, if you want to analyze your competitors’ domains, you can do it in several clicks without spending limits on analyzing each website separately. Here are step-by-step instructions on how you can do that.

Step 1: Generate your token in your Serpstat account. Starting from Plan B ($69 a month), every user has access to the API. If you don’t have one, contact the support team via live chat to discuss options.

Step 2: Open the document and make a copy of it.

Step 3: Enter your token into the cell.

Step 4: Select a database from the dropdown list.

Step 5: Enter a list of your competitors’ domains.

Step 6: Choose Domains > Domain info report in the Serpstat tab.

Step 7: Watch the results.
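If you would rather script this kind of batch lookup than run it from a spreadsheet, the general pattern looks roughly like the sketch below. It is not the Serpstat API: the endpoint URL, the parameter names (`query`, `token`, `db`), and the response fields are all hypothetical placeholders, so check your provider's API documentation for the real ones. The sketch only builds request URLs and parses a sample response; it sends nothing over the network.

```python
import json
from urllib.parse import urlencode

# Hypothetical endpoint, for illustration only (not a real SEO tool's URL).
API_BASE = "https://api.example.com/v1/domain_info"


def build_request_url(domain: str, token: str, database: str) -> str:
    """Assemble one request URL; the parameter names are assumptions."""
    query = urlencode({"query": domain, "token": token, "db": database})
    return f"{API_BASE}?{query}"


def parse_domain_info(raw_json: str) -> dict:
    """Pull a few fields out of a (hypothetical) JSON response body."""
    payload = json.loads(raw_json)
    result = payload.get("result", {})
    return {
        "domain": result.get("domain"),
        "keywords": result.get("keywords", 0),
        "traffic": result.get("traffic", 0),
    }


# One token, one database, many domains: the batch part of the job.
competitors = ["example.com", "example.org"]
for domain in competitors:
    print(build_request_url(domain, token="YOUR_TOKEN", database="us"))

# A made-up response body, showing the parsing step only.
sample_response = '{"result": {"domain": "example.com", "keywords": 120, "traffic": 4500}}'
print(parse_domain_info(sample_response))
```

In a real script, each URL would be fetched with an HTTP client and the parsed rows written to a CSV file or a sheet, which is what the prepared document does for you behind the scenes.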

2. Content curation

Knowing the most trending topics and articles is basic knowledge for anyone who wants to attract their target audience. Moreover, tracking your content performance helps publishers improve their strategies to drive higher traffic and engagement. As blog owners usually maintain lots of documents to report on their marketing results, integrating tracking tools into their own applications is extremely useful and saves a lot of time. The Buzzsumo API provides a wide range of filters. By applying them, you’ll get highly specific reports that support your content creation process. You can not only analyze your own pages but also see your competitors’ top articles. Such reports will help you come up with the most engaging types of content. Its standard API has five resources:

Links shared API request and response examples:

3. Monitor backlinks

Backlink analysis is another essential part of SEO. This process helps people see their link profiles’ weak points and discover new link building opportunities. To integrate your applications with backlink analysis reports, you can use the Majestic API. It’s available on Platinum and API plans. The full API lets you discover the following information:

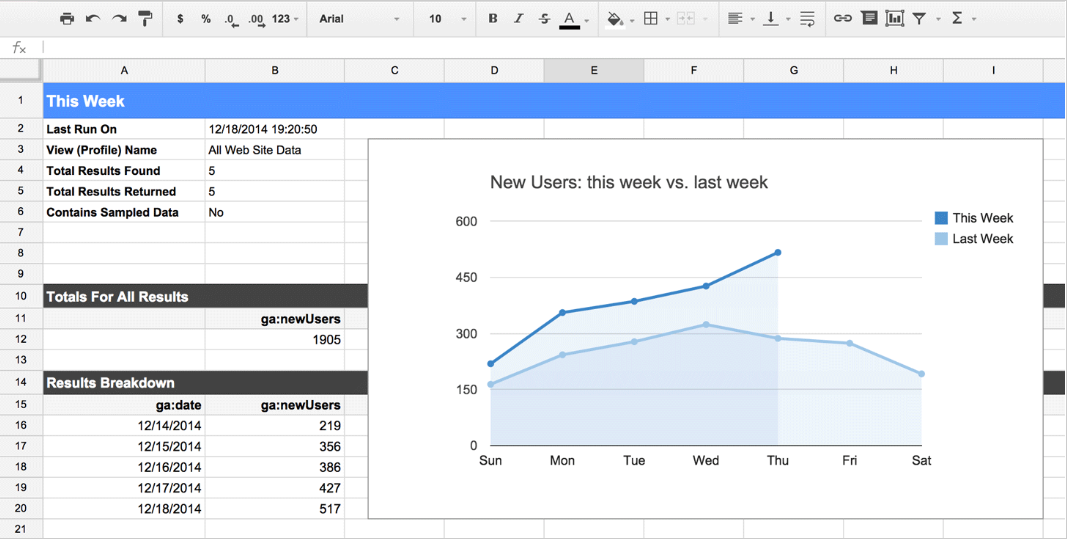

4. Get performance metrics

Running a website without analyzing its results is a complete waste of time, so it’s pretty difficult to find a website owner who doesn’t have a Google Analytics account. The tool gives you a deeper understanding of your audience, lets you evaluate your marketing performance, and helps you find out which tactics work best. However, accessing this data via the tool itself isn’t always convenient. Website owners often need to build custom dashboards and integrate their analytics reports with their business applications. For instance, if you want to create a KPI dashboard for your marketing team, integrating Google Sheets with Google Analytics is a good option. The Google Analytics Reporting API lets users do the following:

To combine the power of the Google Analytics API with the data handling of Google Sheets, use the Google Analytics spreadsheet add-on. It lets you run custom calculations, schedule report creation, share the data with your team, visualize your reports, and embed them in other websites. To install the add-on, follow the step-by-step instructions by Google Developers.

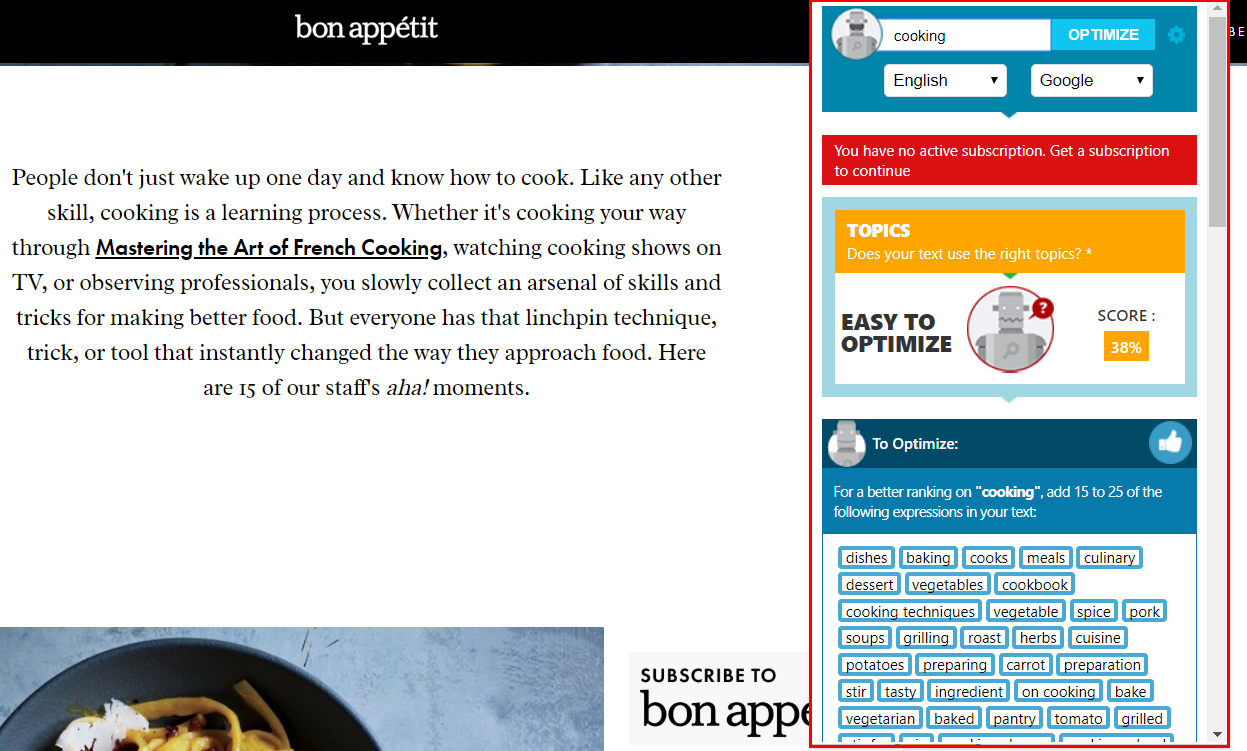

Bonus: More ways to optimize SEO processes

APIs are extremely useful when you deal with vast amounts of data. But what if you need results here and now? In these cases, browser extensions come in handy. They help you quickly analyze a page, find technical issues, or research keywords without switching between the page and your tools. I’ll share four free SEO extensions for Google Chrome that are essential for marketers.

1. SEO TextOptimizer

If you deal with content, you probably know this tool already. If not, it’s the right time to start using it. SEO TextOptimizer measures the quality of your content based on the topic and the words you use in the text. All you need to do is enter your main keyword into its search field. The extension will show you an optimization score along with the words you should add to or remove from the article.

2. Serpstat SEO & Website Analysis Plugin

This SEO extension lets Serpstat users conduct SEO analysis in one click. With it, you can analyze your competitors, get your site’s top 10 keywords, get data on a domain’s traffic, see its visibility trend for a year, and more. Serpstat SEO & Website Analysis Plugin has three tabs (Page Analysis, On-page SEO Parameters, and Domain Analysis) providing detailed information on each aspect.

3. SEOquake

This extension is an interactive SEO dashboard with an SEO overview, a backlink report, and other important metrics. However, its best feature is SERP analysis. When you search for a query, you’ll see a bar with the most crucial domain data below each search result. With SEOquake, you’ll get the following metrics without even clicking through to the page:

4. Woorank

The SEO and website analysis extension by Woorank is great for a quick analysis of a page’s SEO issues. It checks crawl errors, usability, mobile friendliness, local directories, and more. The extension gives your marketing efforts a total score and prioritizes all the issues for you to solve.

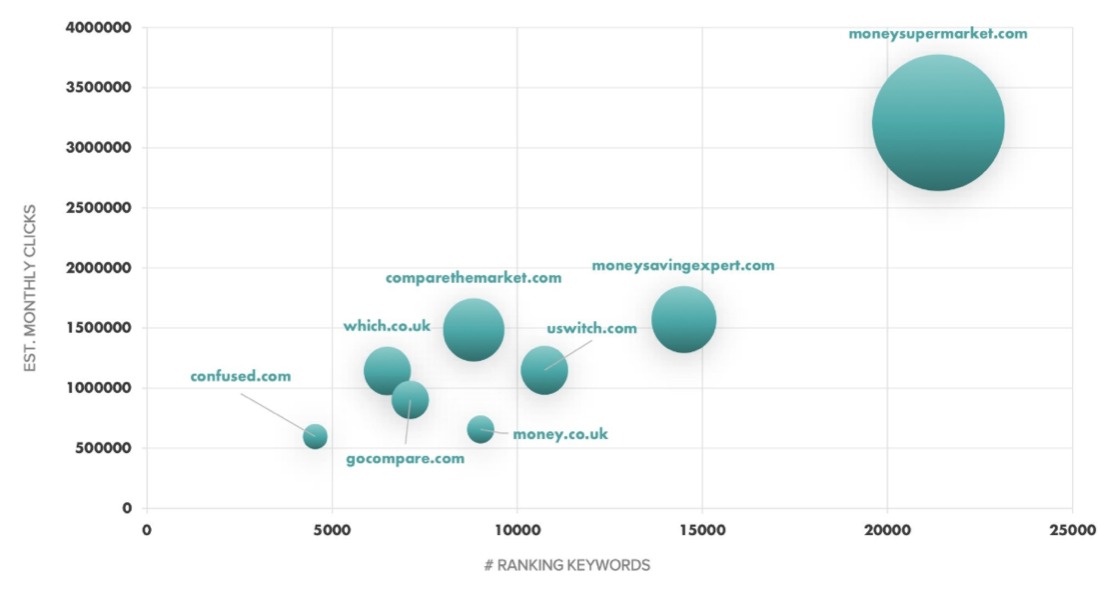

Optimize your working process for more effectiveness

The more tasks you have to solve, the more difficult it is to manage your time. Don’t limit yourself to SEO tools’ interfaces with their standard set of functions. Implement new methods in your SEO analysis processes to become more productive and save time on manual work. Tell us which of these tools have helped you save precious productive time! Leave a comment below.

Inna Yatsyna is a Brand and Community Development Specialist at Serpstat. She can be found on Twitter @erin_yat.

The post How to speed up SEO analysis: API advantages for SEO experts appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/03/04/how-to-speed-up-seo-analysis-api-advantages-for-seo-experts/

As most of you know, the aggregator market is a competitive one, with the popularity of comparison sites rising. Comparison companies are some of the most well-known and commonly used brands today. With external marketing and advertising efforts at an all-time high, people turn to the web for these services. So, who is championing the online market? In an investigation we carried out, we found that brands such as MoneySuperMarket and MoneySavingExpert are the kings of the organic market.

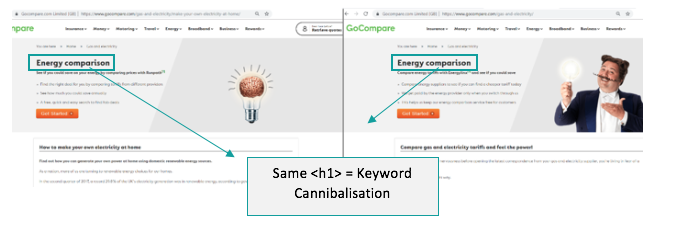

It’s hard to remember a world without comparison sites. It turns out that comparison websites have been around for quite some time. In fact, some of the most popular aggregator domain names have been available since 1999. Yes, 1999, two years after Google launched. Think back to 1999 and how SEO has adapted since. Now think about what websites have had to deal with: keeping up with high customer demands, new functionality, site migrations, the introduction of JavaScript, site speed. The list is endless. The lifespan and longevity of these sites mean that over time issues start to build up, especially in the technical SEO department. Many of us SEOs are aware of the benefits that come with spending time on technical SEO issues, not to mention the great return on investment. As comparison sites are so popular and relied upon by users, simple technical issues can result in a poor user experience that damages customer relationships or, worse, sends users to seek assistance elsewhere. Running comparison crawls has identified the common technical SEO issues across the market leaders. Find out what these issues are and how they harm SEO, and see if they also affect your own website.

1. Keyword cannibalization

When developing and creating new pages, it is easy to forget about keyword cannibalization. Duplicating templates can easily leave metadata and headings unchanged, confusing search engines about which page to rank for a keyword. Here is an example from GoCompare.

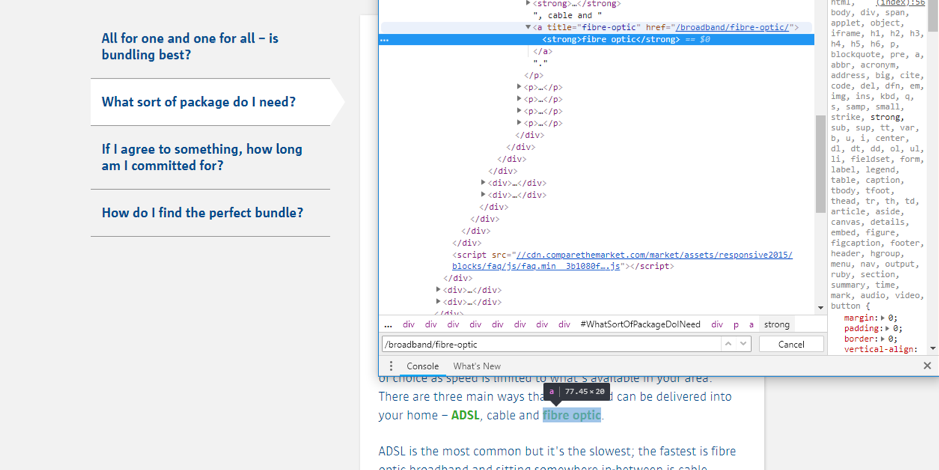

The page on the left has the cannibalizing first heading. This is because the page’s h1 is situated in the top banner. The page should instead target the long-tail opportunity “how to make your own electricity at home,” which has been placed in an h2 tag directly under the banner. The best course of action here would be to tweak the template: remove the banner, place the call to action in the article body, and place the targeted keyword in a first heading tag. Comparison sites are prime candidates for keyword cannibalization, as duplicated templates, services, and offers result in cannibalization issues sitewide.

The fix

Run a crawl of your domain and gather all the duplicated first heading tags. You can use tools such as Sitebulb for this. Work out which is the original page and which is the duplicate, then gather your keyword data to find a better keyword alternative for the duplicate page.

Top tip

Talk to your SEO expert when creating new pages. They will be able to provide recommendations on URL structure, first headings, and titles. It is worth involving an SEO at the start of the planning process when rolling out new pages.

2. Internal redirects

Numerous changes can result in internal redirects. The primary causes are redundant pages, upgrades to a site’s functionality, and the dreaded site migration. Google urged sites to move to HTTPS in January 2017, with the ideal methodology being to 301 redirect HTTP pages to HTTPS, and it’s painful to think about the mass of internal redirects that created. Here’s an example.

Comparison sites specifically need to be aware of this. Just as on ecommerce sites, products and services become unavailable. The normal behavior seems to be to redirect that product either to an alternative page or, in most cases, back to the parent directory. This can cause internal redirects across the site that need immediate attention.

The fix

To tackle this issue, gather all the internally redirected URLs from your crawler. Once you’ve done this, find the link on the parent page by inspecting the page in Google Chrome’s developer tools. Find where the link is and recommend that your development team change the href attribute of the link anchor to the final destination of the redirect.

3. Cleaning up the sitemap

With loads of changes happening across aggregator sites all the time, it is likely that the sitemap gets neglected. However, it’s imperative you don’t allow this to happen! Search engines such as Google might ignore sitemaps that return “invalid” URLs. Here’s an example.

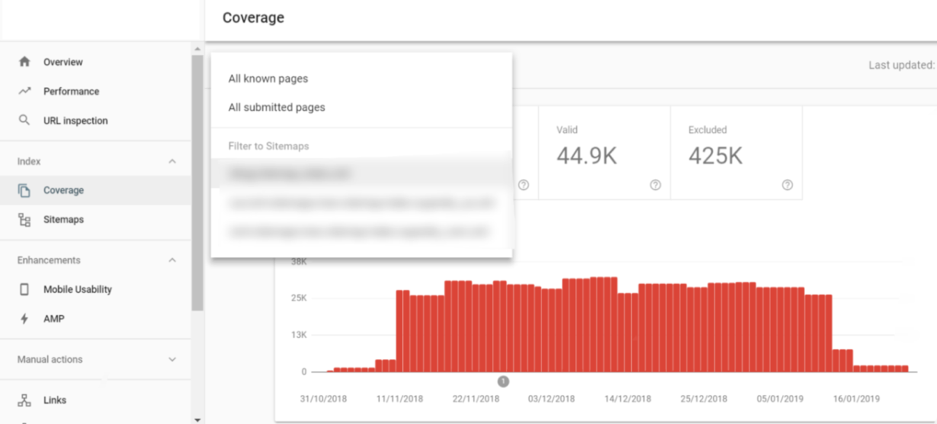

Usually, a site’s 400/500 status code pages are on the development team’s radar to fix. However, it isn’t best practice for these pages to still sit in the sitemap. As they might be set live, orphaned and noindexed, or redirected elsewhere, that leaves some less severe issues within the sitemap file. Aggregators have to deal with sites changing product ranges, releasing new services, and even discontinuing services on a regular basis. New pages, therefore, have to be set up, redirects are applied, and sometimes issues are missed.

The fix

First, you need to identify errors within the sitemap. Search Console is perfect for this. Go to the Coverage section and filter with the drop-down. Select your sitemaps with “Filter to Sitemaps” to inspect the errors within them.

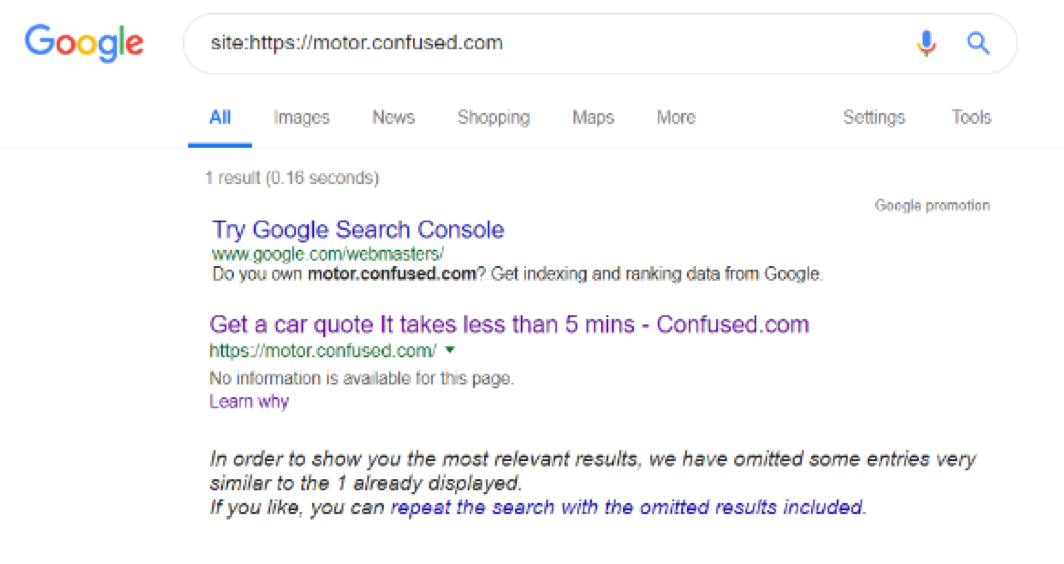
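Search Console is the tool recommended here, but the same check can be roughed out in a few lines of Python if you already have status codes from a crawl. The sketch below is an illustration only: `audit_sitemap` and the `get_status` callback are hypothetical names, and the status lookup is assumed to come from your own crawler export rather than from live requests.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every <loc> value found in a sitemap <urlset> document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def audit_sitemap(xml_text, get_status):
    """Split sitemap URLs into 4xx/5xx errors and 3xx redirects.

    get_status is a hypothetical callback mapping a URL to its HTTP status code,
    for example the .get method of a dict built from a crawl export.
    """
    report = {"errors": [], "redirects": []}
    for url in sitemap_urls(xml_text):
        status = get_status(url)
        if status is None:
            continue  # URL was not crawled, so nothing can be said about it
        if status >= 400:
            report["errors"].append((url, status))
        elif 300 <= status < 400:
            report["redirects"].append((url, status))
    return report
```

Keeping the status lookup as a callback means the same function works with a crawler export, a cached dictionary, or live requests, whichever you have to hand.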

If your sitemap has 400 or 500 status code pages, these are the priority, so focus on sorting them out first. The odd redirect or canonical issue is less urgent.

Top tip

Check your sitemap weekly or even more frequently. It is also a great way of checking for broken pages across the site.

4. Subdomains are causing index bloat

Behind any great comparison site is a quotation functionality. This allows users to enter personal information for a quote and to revisit previously saved data, kind of like a shopping cart on most ecommerce websites. However, these are usually hosted on subdomains and can get indexed, which you don’t really want. These are mostly thin content pages, and useless pages in Google’s index equal index bloat. Here’s an example.

The fix

The solution is to add the “noindex” meta tag to pages on the quotation subdomains to stop them from being indexed. You can also block crawling in each subdomain’s own robots.txt file. Just make sure the pages aren’t in the search engines’ index before you block them, as pages that can no longer be crawled won’t drop out of the SERPs.

5. Spreading link equity to irrelevant pages

Internal linking is important. However, passing link equity thinly across pages can cause a loss in value. Think of a pyramid, and how the homepage spreads equity to the directory and then down to the subdirectories through keyword-targeted anchor text.

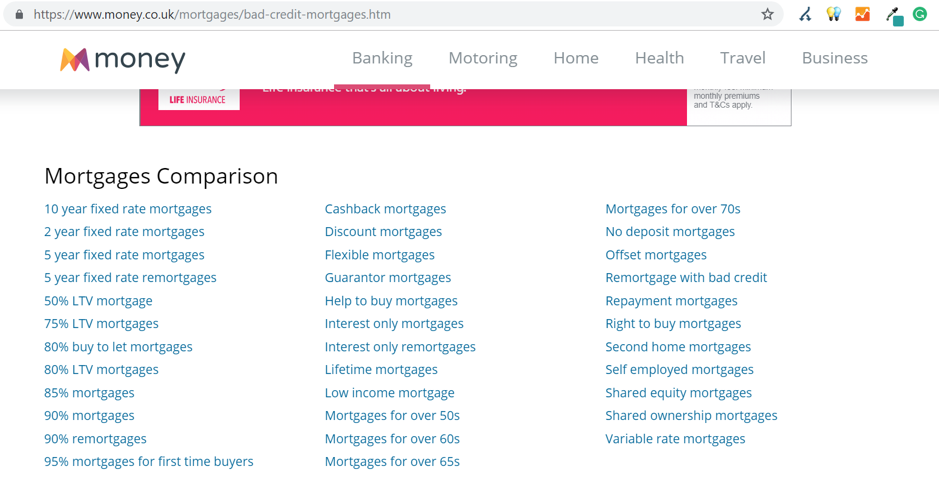

The pages that receive equity should hold that value and only link out to pages that are relevant. As comparison sites target a range of products and opportunities, it is important to include them within the site architecture without spreading the equity thinly. How do we do this?

1. Consider the architecture of your site. For example, “fixed rate mortgages” has different yearly offerings. Most sites place these under a mortgage subdirectory, but they could easily have their own directory. This would benefit the site architecture, as it lowers the click depth for those important pages and stops the thin spread of equity.

2. Only link to what is relevant. Take the example below. The targeted keyword here is “bad credit mortgages.” Money.co.uk then supplies a load of internal links at the bottom of the page that aren’t relevant to the keyword intent. Therefore, the equity is spread to these pages, resulting in the page losing value.

The fix

Review the internal linking structure. You can do this by running pages through Screaming Frog, which identifies pages that have a click depth greater than two and lets you evaluate the outgoing links. If there are a lot, this could be a good indicator that pages are spreading the equity thinly. Manually evaluate the pages to find where the links are going and remove any irrelevant ones that spread equity unnecessarily.

6. Orphaned pages

Following on from the above point, pages that are orphaned, or poorly linked to, will receive little equity. Comparison sites are prime candidates for this. MoneySuperMarket has several orphaned pages, especially in the blog section of the site.
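Both checks discussed here, click depth and orphaned pages, come down to walking the internal link graph from the homepage. Crawlers such as Screaming Frog and Sitebulb do this for you; the sketch below only illustrates the idea, assuming you have exported internal links as a dictionary of page to linked pages. The function names and sample paths are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage; returns {page: clicks from home}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def deep_pages(depths, max_depth=2):
    """Pages that sit more than max_depth clicks from the homepage."""
    return sorted(page for page, depth in depths.items() if depth > max_depth)


def orphaned_pages(sitemap_paths, depths):
    """Pages listed in the sitemap that no internal link path reaches."""
    return sorted(set(sitemap_paths) - set(depths))


links = {
    "/": ["/mortgages", "/blog"],
    "/mortgages": ["/mortgages/fixed-rate"],
    "/mortgages/fixed-rate": ["/mortgages/fixed-rate/5-year"],
}
depths = click_depths(links, "/")
print(deep_pages(depths))
print(orphaned_pages(["/", "/blog", "/blog/old-post"], depths))
```

Pages that show up in the second list are in the sitemap but unreachable by internal links, which is the situation the fix for orphaned pages asks you to evaluate.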

The fix

Use Sitebulb to crawl the site and discover orphaned pages. Spend time evaluating these, as it might be that these pages should be orphaned. However, if they are present in the sitemap, that indicates one of the two problems given below.

If the pages are redundant, make them non-indexable. However, if they should be linked to, evaluate your site’s internal architecture to work out a linking strategy for these pages.

Top tip

It is very easy for blog posts to get orphaned. Methods such as topic clustering can benefit your content marketing efforts while making sure your pages aren’t being orphaned.

Last ditch tips

A lot of these issues occur across a range of different sites and many sectors. As comparison sites undergo a lot of changes and development work, with a vast product range and loads to aggregate, it is very hard to keep up to date with technical SEO issues. Be vigilant and delegate resources sensibly. Technical SEO issues shouldn’t be ignored: actively monitor, and run crawls and checks after any site development work has been rolled out. This can save your organic performance and keep your technical SEO game strong.

Tom Wilkinson is Search & Data Lead at Zazzle Media.

The post Common technical SEO issues and fixes for aggregators and finance brands appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/03/04/common-technical-seo-issues-and-fixes-for-aggregators-and-finance-brands/

Automating the process of narrowing down site traffic issues with Python gives you the opportunity to help your clients recover fast. This is the second part of a three-part series. In part one, I introduced our approach to nail down the pages losing traffic. We call it the “winners vs losers” analysis. If you have a big site, reviewing individual pages losing traffic as we did in part one might not give you a good sense of what the problem is. So, in part two we will create manual page groups using regular expressions. If you stick around to read part three, I will show you how to group pages automatically using machine learning. You can find the code used in parts one, two, and three in this Google Colab notebook. Let’s walk through part two and learn some Python.
Incorporating redirects

As the site we’re analyzing moved from one platform to another, the URLs changed, and a decent number of redirects were put in place. In order to track winners and losers more accurately, we want to follow the redirects from the first set of pages. We were not really comparing apples to apples in part one. If we want to get a fully accurate look at the winners and losers, we’ll have to discover where the source pages are redirecting to, then repeat the comparison.

1. Python requests

We’ll use the requests library, which simplifies web scraping, to send an HTTP HEAD request to each URL in our Google Analytics data set. If it returns a 3xx redirect, we’ll record the ultimate destination and re-run our winners and losers analysis with the correct, final URLs. HTTP HEAD requests speed up the process and save bandwidth, as the web server only returns headers, not full HTML responses. Below are two functions we’ll use to do this. The first function takes in a single URL and returns the status code and any resulting redirect location (or None if there isn’t a redirect). The second function takes in a list of URLs and runs the first function on each of them, saving all the results in a list. View the code on Gist. This process might take a while (depending on the number of URLs). Please note that we introduce a delay between requests because we don’t want to overload the server with our requests and potentially cause it to crash. We also only check for the valid redirect status codes 301, 302, and 307. It is not wise to check the full 3xx range because, for example, 304 means the page didn’t change. Once we have the redirects, we can repeat the winners and losers analysis exactly as before.

2. Using combine_first

In part one we learned about different join types. We first need to do a left merge/join to append the redirect information to our original Google Analytics data frame while keeping the data for rows with no URLs in common.
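Going back to step 1 for a moment: the Gist is not embedded in this copy, so here is a rough sketch of what the two redirect-checking functions could look like, not the author’s original code. The names `get_redirect` and `get_redirects`, the 0.5 second delay, and the optional `head` parameter (which lets a stub stand in for `requests.head` when testing offline) are all assumptions made for illustration.

```python
import time

# Only these codes are treated as redirects, as described above (304 is skipped on purpose).
REDIRECT_CODES = {301, 302, 307}


def get_redirect(url, head=None):
    """Return (status_code, final_url) for a redirecting URL, else (status_code, None)."""
    if head is None:
        import requests  # third-party library; imported lazily so a stub can be injected

        head = requests.head
    # requests.head does not follow redirects by default, so ask for it explicitly.
    response = head(url, allow_redirects=True, timeout=10)
    if response.history and response.history[0].status_code in REDIRECT_CODES:
        # history holds the intermediate responses; response.url is the ultimate destination.
        return response.history[0].status_code, response.url
    return response.status_code, None


def get_redirects(urls, delay=0.5, head=None):
    """Check each URL in turn, pausing between requests to go easy on the server."""
    results = []
    for url in urls:
        status, location = get_redirect(url, head=head)
        results.append({"url": url, "status": status, "redirect_url": location})
        time.sleep(delay)
    return results
```

The list of dictionaries returned by `get_redirects` can be turned straight into a data frame for the merge described in step 2.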
To make sure that we use either the original URL or the redirect URL if it exists, we use another data frame method called combine_first() to create a true_url column. For more information on exactly how this method works, see the combine_first documentation. We also extract the path from the URLs and convert the dates to Python datetime objects. View the code on Gist.

3. Computing totals before and after the switch

View the code on Gist.

4. Recalculating winners vs losers

View the code on Gist.

5. Sanity check

View the code on Gist. This is what the output looks like.
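Since the Gists are not reproduced here, the left merge and combine_first() step from section 2 can be sketched as follows. This is a minimal illustration with made-up data: the `url`, `redirect_url`, and `users` column names and the `add_true_url` helper are assumptions, and only `true_url` comes from the article.

```python
import pandas as pd


def add_true_url(ga, redirects):
    """Left-merge redirect data onto the analytics frame and pick the final URL per row."""
    # how="left" keeps every analytics row, including those with no redirect entry.
    merged = ga.merge(redirects, on="url", how="left")
    # combine_first keeps redirect_url where present and falls back to the original url.
    merged["true_url"] = merged["redirect_url"].combine_first(merged["url"])
    return merged


# Toy data standing in for the Google Analytics export and the redirect check results.
ga = pd.DataFrame({"url": ["/old-a", "/b", "/c"], "users": [10, 20, 30]})
redirects = pd.DataFrame({"url": ["/old-a"], "redirect_url": ["/new-a"]})

result = add_true_url(ga, redirects)
print(result[["url", "true_url", "users"]])
```

Rows without a redirect keep their original URL in `true_url`, so the winners and losers comparison can then group on that single column.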

Using regular expressions to group pages

Many websites have well-structured URLs that make their page types easy to parse. For example, a page with any one of the paths given below is pretty clearly a paginated category page.

Meanwhile, a path structure like the paths given below might be a product page.

We need a way to categorize these pages based on the structure of the text contained in the URL. Luckily, this type of problem (that is, examining structured text) can be tackled very easily with a “domain-specific language” known as regular expressions, or “regex.” Regular expressions can be extremely complicated or extremely simple. For example, the following regex (written in Python) would allow you to find the exact phrase “find me” in a string of text. regex = r"find me" Let’s try it out real quick.

    import re

    text = "If you can find me in this string of text, you win! But if you can't find me, you lose"
    regex = r"find me"

    # Print the start index and matched text for every occurrence
    print("Match index", "\tMatch text")
    for match in re.finditer(regex, text):
        print(match.start(), "\t\t", match.group())

The output should be:

    Match index     Match text
    11              find me
    69              find me

Grouping by URL

Now we make use of a slightly more advanced regular expression that contains a negative lookahead. Fully understanding the following regular expressions is left as an exercise for the reader, but suffice it to say we’re looking for “Collection” (aka “category”) pages and “Product” pages. We create a new column called “group” where we label any rows whose true_url matches our regex accordingly. Finally, we simply re-run our winners and losers analysis, but instead of grouping by individual URLs like we did before, we group by the page type we found using regex. View the code on Gist. The output looks like this:

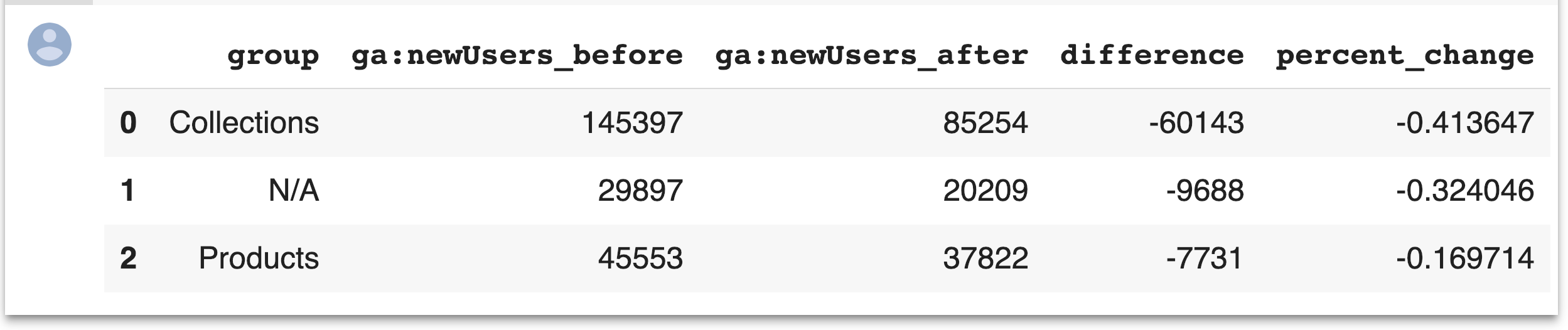

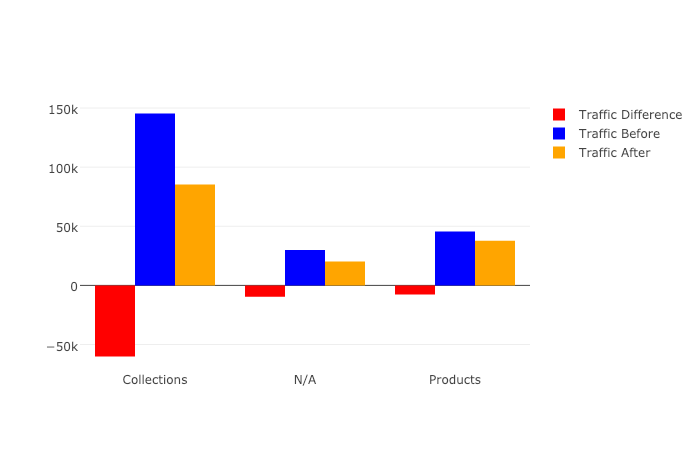
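The Gist is not reproduced in this copy, so here is a minimal sketch of the grouping idea rather than the author’s code. The two patterns assume Shopify-style paths (`/collections/...` and `/products/...`), which is a guess made for illustration, and `page_group` and `traffic_diff_by_group` are hypothetical helper names.

```python
import re
from collections import defaultdict

# Hypothetical patterns: a collection path that does NOT also contain /products/
# (the negative lookahead), and any path that contains /products/.
COLLECTION_RE = re.compile(r"^/collections/(?!.*/products/)")
PRODUCT_RE = re.compile(r"/products/")


def page_group(path):
    """Label a URL path with its page type."""
    if COLLECTION_RE.search(path):
        return "Collections"
    if PRODUCT_RE.search(path):
        return "Product"
    return "Uncategorized"


def traffic_diff_by_group(rows):
    """Sum (after - before) per group; rows are (path, users_before, users_after)."""
    totals = defaultdict(int)
    for path, before, after in rows:
        totals[page_group(path)] += after - before
    return dict(totals)


rows = [
    ("/collections/shoes", 100, 40),
    ("/collections/shoes?page=2", 50, 20),
    ("/collections/shoes/products/red-sneaker", 30, 35),
    ("/pages/about", 10, 10),
]
print(traffic_diff_by_group(rows))
```

The lookahead is what keeps a product URL nested under a collection path from being counted as a collection page; without it, the first pattern would claim both.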

Plotting the results

Finally, we’ll plot the results of our regex-based analysis to get a feel for which groups are doing better or worse. We’re going to use an open source plotting library called Plotly to do so. In our first set of charts, we’ll define three bar charts that will go on the same plot, corresponding to the traffic differences, the data from before, and the data from after our cutoff point, respectively. We then tell Plotly to save an HTML file containing our interactive plot, and display the HTML within the notebook environment. Notice that Plotly has grouped together our bar charts based on the “group” variable that we passed to all the bar charts on the x-axis, so now we can see that the “collections” group very clearly has had the biggest difference between our two time periods. View the code on Gist. We get this nice plot, which you can interact with in the Jupyter notebook!

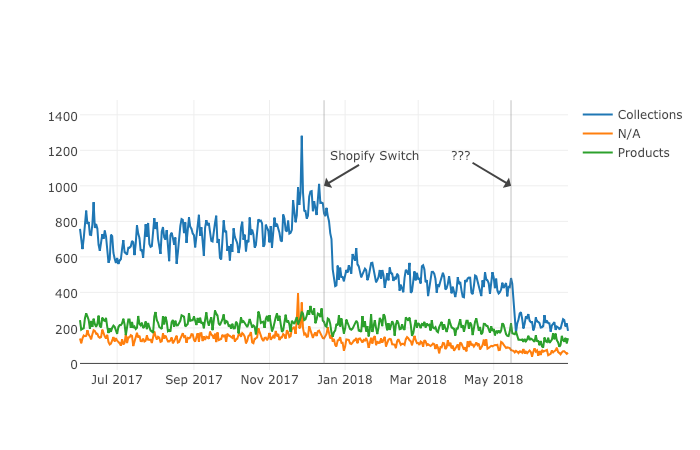

Next up we’ll plot a line graph showing the traffic over time for all of our groups. Similar to the one above, we’ll create three separate lines that will go on the same chart. This time, however, we do it dynamically with a “for loop”. After we create the line graph, we can add some annotations using the Layout parameter when creating the Plotly figure. View the code on Gist. This produces this painful to see, but valuable chart.

Results

From the bar chart and our line graph, we can see that two separate events occurred with the “Collections” type pages, which caused a loss in traffic. Unlike the uncategorized pages or the product pages, something has gone wrong with collections pages in particular. From here we can take off our programmer hats, put on our SEO hats, and go digging for the cause of this traffic loss, now that we know that it’s the “Collections” pages which were affected the most. During further work with this client, we narrowed down the issue to a massive consolidation of category pages during the move. We helped them recreate the pages from the old site and linked them from a new HTML sitemap with all the pages, as they didn’t want these old pages in the main navigation. Manually grouping pages is a valuable technique, but it is a lot of work if you need to work with many brands. In part three, the final part of the series, I will discuss a clever technique to group pages automatically using machine learning.

Hamlet Batista is the CEO and founder of RankSense, an agile SEO platform for online retailers and manufacturers. He can be found on Twitter @hamletbatista.

The post Using Python to recover SEO site traffic (Part two) appeared first on Search Engine Watch. from https://searchenginewatch.com/2019/03/08/using-python-to-recover-seo-site-traffic-part-two/

The ecommerce market is highly competitive, with thousands of small players striving to keep up with giants like Amazon and eBay. Still, for both leaders and followers, the web store UX remains the factor that defines who wins customers’ hearts (and purses) and who is to leave the stage. UX stays a top priority in ecommerce, as the majority of shoppers prefer convenience to a nifty look. According to Shopify, 80 percent of users admit that a poor search experience can make them leave a web store. So, a well-thought-out catalog navigation system is one of the crucial factors of a web store’s success.
Why faceted navigation?

Faceted search is probably the most convenient search system to date. It relies on sets of terms structured by relation (aka facets). Users mix and match options (price, color, fabric, brand, and more) to progressively narrow down the search results list until they get a short selection of relevant picks. Apart from being convenient, the faceted search system can tell shoppers more about the products they are looking for. For example, amateur hikers looking for their first waterproof jackets will be surprised to see a variety of membrane types.

How to use faceted navigation correctly?

Like any tool, faceted navigation should be used carefully if you want to get the most out of it and cause no harm to your business. So how do you set it up right? Which facets should you use, and how many? How should you arrange them? These six principles will help you find the answers.

1. Be relevant

Facets should include only the options that shoppers have in mind when they open a particular category. For example, the shampoo section may include hair type, hair concern, and brand. The only way to make the list of facets complete is to listen carefully to your customers. Your search logs contain commonly searched keywords, and even the messages that customers leave for the sales and support teams can give you more information about the search criteria of your target shoppers. To start, you can check the thematic filters that competitors and industry leaders use.

2. Be brief

Too few facets are not enough to narrow down the search results list, but too many options can confuse customers. How do you provide an optimal number of filters? Some web stores create groups of facets that can be collapsed or expanded and add a scrollbar within each group. Consider the example of Lookfantastic.

3. Be convenient

Introduce a block with the selected filters and breadcrumbs. Use bold formatting to remind customers that their search results are limited by the chosen options.
And don’t forget about the “Clear all” button to let shoppers quickly start the search from the beginning. One more tip to prevent generating the “No results found” pages (which is harmful to both CX and SEO) is to use AJAX to dynamically update the number of matches next to each facet. |

Author

Pleasure to introduce myself. I am Sean Webb, 27 years old, from Manchester, UK. I do affiliate marketing and have spent lots of time learning how to rank easy to medium competition keywords. I have recently started PPL and video marketing and am learning more about them. |
